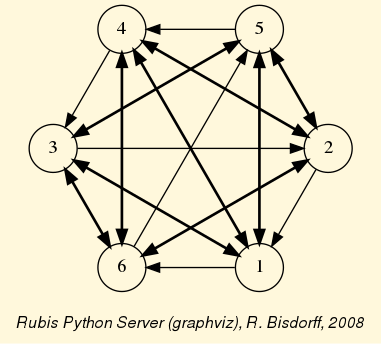

3. Pearls of bipolar-valued epistemic logic

- Author:

Raymond Bisdorff, Emeritus Professor of Applied Mathematics and Computer Science, University of Luxembourg

- Url:

- Version:

Python 3.14 (release: 3.14.5)

- PDF version:

- Copyright:

R. Bisdorff 2013-2026

- New:

In this part of the Digraph3 documentation, we offer insight into the computational enhancements one may get when working in a bipolar-valued epistemic logic framework, such as:

- easily coping with missing data and uncertain criterion significance weights,

- computing valued ordinal correlations between bipolar-valued outranking digraphs,

- computing digraph kernels and solving bipolar-valued kernel equation systems,

- testing for stability and confidence of outranking statements when facing uncertain performance criteria significance weights or decision objectives’ importance weights, and

- applying bipolar-valued base 3 Bachet numbers for ranking multicriteria incommensurable performance records.

Contents

- Enhancing the outranking based MCDA approach

- Enhancing social choice procedures

- Theoretical and computational advancements

3.1. Enhancing the outranking based MCDA approach

“The goal of our research was to design a resolution method [..] that is easy to put into practice, that requires as few and reliable hypotheses as possible, and that meets the needs [of the decision maker].” – B. Roy et al. [13]

3.1.1. On confident outrankings with uncertain criteria significance weights

When modelling preferences following the outranking approach, the signs of the majority margins do sharply distribute validation and invalidation of pairwise outranking situations. How can we be confident in the resulting outranking digraph, when we acknowledge the usual imprecise knowledge of criteria significance weights coupled with small majority margins?

To answer this question, one usually requires qualified majority margins for confirming outranking situations. But how should such a qualifying majority level be chosen: two thirds or three fourths of the significance weights?

In this tutorial we propose to link the qualifying significance majority with a required alpha%-confidence level. We therefore model the significance weights as random variables following more or less widespread distributions around an average significance value that corresponds to the given deterministic weight. As the bipolar-valued random credibility of an outranking statement hence results from the simple sum of positive or negative independent random variables, we may apply the Central Limit Theorem (CLT) for computing the bipolar likelihood that the expected majority margin will indeed be positive, respectively negative.

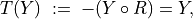

3.1.1.1. Modelling uncertain criteria significance weights

Let us consider the significance weights of a family F of m criteria to be independent random variables Wj, distributing the potential significance weights of each criterion j = 1, …, m around a mean value E(Wj) with variance V(Wj).

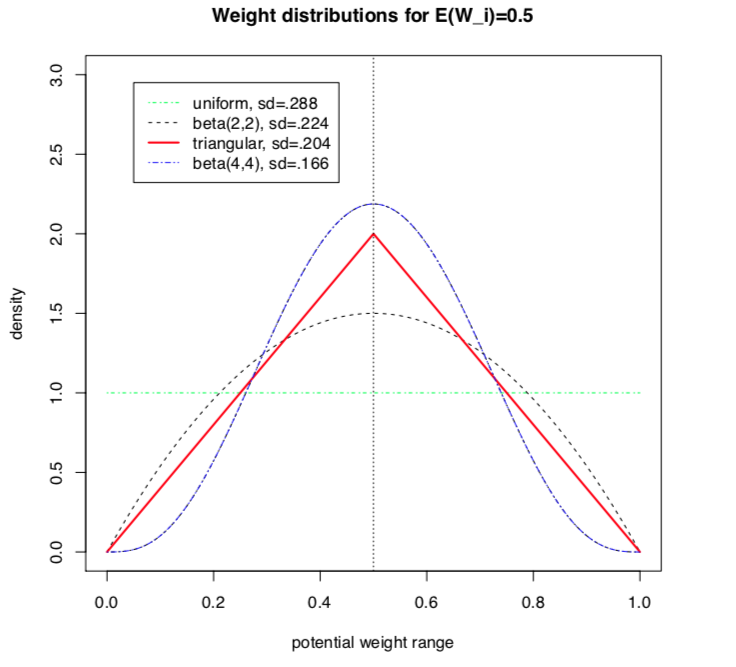

Choosing a specific stochastic model of uncertainty is usually application specific. In the limited scope of this tutorial, we will illustrate the consequence of this design decision on the resulting outranking modelling with four slightly different models for taking into account the uncertainty with which we know the numerical significance weights: uniform, triangular, and two models of Beta laws, one more widespread and, the other, more concentrated.

When considering, for instance, that the potential range of a significance weight is distributed between 0 and two times its mean value, we obtain the following random variates:

- A continuous uniform distribution on the range 0 to 2E(Wj). Thus Wj ~ U(0, 2E(Wj)) and V(Wj) = 1/3 (E(Wj))^2;

- A symmetric beta distribution with, for instance, parameters alpha = 2 and beta = 2. Thus Wj ~ Beta(2,2) * 2E(Wj) and V(Wj) = 1/5 (E(Wj))^2;

- A symmetric triangular distribution on the same range with mode E(Wj). Thus Wj ~ Tr(0, 2E(Wj), E(Wj)) and V(Wj) = 1/6 (E(Wj))^2;

- A narrower beta distribution with, for instance, parameters alpha = 4 and beta = 4. Thus Wj ~ Beta(4,4) * 2E(Wj) and V(Wj) = 1/9 (E(Wj))^2.

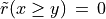

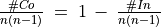

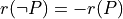

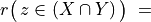

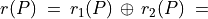

Fig. 3.1 Four models of uncertain significance weights

It is worth noticing that these four uncertainty models all share the same expected value E(Wj), whereas their respective variances decrease from 1/3 down to 1/9 of the square of E(Wj) (see Fig. 3.1).
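The four variance factors quoted above can be checked with exact rational arithmetic. The following is a minimal sketch using the textbook variance formulas of the uniform, triangular and beta laws; the helper names are illustrative and not part of Digraph3.

```python
from fractions import Fraction


def uniform_variance(a, b):
    """Variance of a continuous uniform law on [a, b]."""
    return (b - a) ** 2 / Fraction(12)


def triangular_variance(a, b, c):
    """Variance of a triangular law on [a, b] with mode c."""
    return (a * a + b * b + c * c - a * b - a * c - b * c) / Fraction(18)


def scaled_beta_variance(alpha, beta, scale):
    """Variance of scale * Beta(alpha, beta)."""
    var = Fraction(alpha * beta, (alpha + beta) ** 2 * (alpha + beta + 1))
    return scale ** 2 * var


# E(Wj) is set to 1, so each result reads as a multiple of E(Wj)^2
mean = Fraction(1)
variances = {
    "uniform": uniform_variance(0, 2 * mean),
    "beta(2,2)": scaled_beta_variance(2, 2, 2 * mean),
    "triangular": triangular_variance(0, 2 * mean, mean),
    "beta(4,4)": scaled_beta_variance(4, 4, 2 * mean),
}
for name, value in variances.items():
    print(f"{name:10s} V(Wj) = {value} * E(Wj)^2")
```

The printed factors are 1/3, 1/5, 1/6 and 1/9, in the order of the list above.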

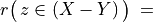

3.1.1.2. Bipolar-valued likelihood of ‘at least as good as’ situations

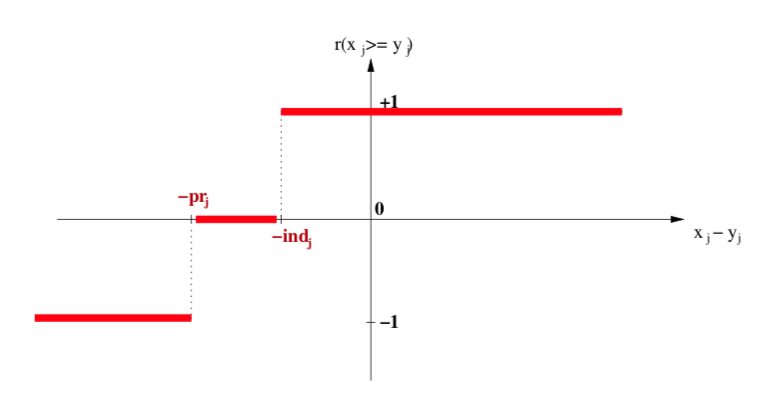

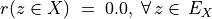

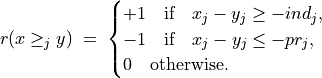

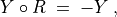

Let A = {x, y, z,…} be a finite set of n potential decision actions, evaluated on F = {1,…, m}, a finite and coherent family of m performance criteria. On each criterion j in F, the decision actions are evaluated on a real performance scale [0; Mj], supporting an upper-closed indifference threshold indj and a lower-closed preference threshold prj such that 0 <= indj < prj <= Mj. The marginal performance of object x on criterion j is denoted xj. Each criterion j thus characterises a marginal double threshold order $\geq_j$ on A (see Fig. 3.2):

$$r(x \geq_j y) = \begin{cases} +1 & \text{if } x_j - y_j \geq -ind_j, \\ -1 & \text{if } x_j - y_j \leq -pr_j, \\ \phantom{+}0 & \text{otherwise.} \end{cases}$$

- Semantics of the marginal bipolar-valued characteristic function:

+1 signifies x is performing at least as good as y on criterion j,

-1 signifies that x is not performing at least as good as y on criterion j,

0 signifies that it is unclear whether, on criterion j, x is performing at least as good as y.

Fig. 3.2 Bipolar-valued outranking characteristic function
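As a minimal sketch of the marginal characteristic function described above, the following helper assumes the usual double threshold rule: +1 as soon as the performance difference is not worse than minus the indifference threshold, -1 as soon as it reaches minus the preference threshold, and 0 in between. The function name is hypothetical and not a Digraph3 API; the sample values are taken from the pairwise comparison listings shown later in this section.

```python
def marginal_characteristic(xj, yj, ind, pr):
    """Bipolar-valued 'x at least as good as y' characteristic on one criterion.

    ind is the indifference threshold and pr the preference threshold,
    with 0 <= ind < pr (assumed, as stated in the text).
    """
    diff = xj - yj
    if diff >= -ind:
        return +1   # x is performing at least as good as y
    if diff <= -pr:
        return -1   # x is not performing at least as good as y
    return 0        # unclear: the difference falls between both thresholds


# Differences of +8.71, -8.71, -1.75 and -12.41 with ind = 5 and pr = 10
print(marginal_characteristic(44.62, 35.91, 5.0, 10.0))
print(marginal_characteristic(35.91, 44.62, 5.0, 10.0))
print(marginal_characteristic(13.10, 14.85, 5.0, 10.0))
print(marginal_characteristic(77.02, 89.43, 5.0, 10.0))
```

The four calls return +1, 0, +1 and -1, which agrees with the r() column reported for these performance differences in the pairwise comparison listings.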

Each criterion j in F contributes the random significance $W_j$ of its ‘at least as good as’ characteristic $r(x \geq_j y)$ to the global characteristic $\tilde{r}(x \geq y)$ in the following way:

$$\tilde{r}(x \geq y) = \sum_{j \in F} W_j \times r(x \geq_j y)$$

Thus, $\tilde{r}(x \geq y)$ becomes a simple sum of positive or negative independent random variables with known means and variances, where $\tilde{r}(x \geq y) > 0$ signifies that x is globally performing at least as good as y, $\tilde{r}(x \geq y) < 0$ signifies that x is not globally performing at least as good as y, and $\tilde{r}(x \geq y) = 0$ signifies that it is unclear whether x is globally performing at least as good as y.

From the Central Limit Theorem (CLT), we know that such a sum of random variables leads, with m getting large, to a Gaussian distribution Y with

$$E(Y) = \sum_{j \in F} E(W_j) \times r(x \geq_j y), \quad \text{and} \quad V(Y) = \sum_{j \in F} V(W_j) \times |r(x \geq_j y)|.$$

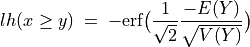

The likelihood of validation, respectively invalidation, of an ‘at least as good as’ situation, denoted $lh(x \geq y)$, may hence be assessed by the probability P(Y>0) = 1.0 - P(Y<=0) that Y takes a positive value, resp. the probability P(Y<0) that Y takes a negative value. In the bipolar-valued case here, we can judiciously make use of the standard Gaussian error function erf, i.e. the bipolar 2P(Z) - 1.0 version of the standard Gaussian P(Z) probability distribution function:

$$lh(x \geq y) = -\mathrm{erf}\Big(\frac{1}{\sqrt{2}} \, \frac{-E(Y)}{\sqrt{V(Y)}}\Big)$$

The range of the bipolar-valued $lh(x \geq y)$ hence becomes [-1.0;+1.0], and $-lh(x \geq y) = lh(x \not\geq y)$, i.e. a negative likelihood represents the likelihood of the corresponding negated ‘at least as good as’ situation. A likelihood of +1.0 (resp. -1.0) means the corresponding preferential situation appears certainly validated (resp. invalidated).
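The bipolar likelihood may be sketched in a few lines with the standard library `math.erf` function. The sketch assumes that the Gaussian approximation Y has mean sum_j E(Wj) * r_j and variance sum_j V(Wj) * |r_j|, where r_j is the marginal characteristic on criterion j; the function name and its argument layout are illustrative assumptions, not the Digraph3 implementation.

```python
from math import erf, sqrt


def bipolar_likelihood(chars, means, variances):
    """Signed likelihood in [-1, +1] of a global 'at least as good as' statement.

    chars     : marginal characteristic values r_j in {-1, 0, +1}
    means     : expected significance weights E(Wj)
    variances : variances V(Wj) of the significance weights
    """
    e_y = sum(m * r for m, r in zip(means, chars))
    v_y = sum(v * abs(r) for v, r in zip(variances, chars))
    if v_y == 0:
        return 0.0  # no criterion takes position: indeterminate statement
    return -erf((1.0 / sqrt(2.0)) * (-e_y / sqrt(v_y)))


# Unit mean weights with triangular uncertainty, hence V(Wj) = 1/6
four_vs_three = [+1] * 4 + [-1] * 3
print(f"{bipolar_likelihood(four_vs_three, [1.0] * 7, [1 / 6] * 7):+.2f}")
print(f"{bipolar_likelihood([-r for r in four_vs_three], [1.0] * 7, [1 / 6] * 7):+.2f}")
```

With four criteria in favour and three against, the sketch gives about +0.65, and reversing all signs gives the negated value, which illustrates the sign symmetry of the bipolar likelihood.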

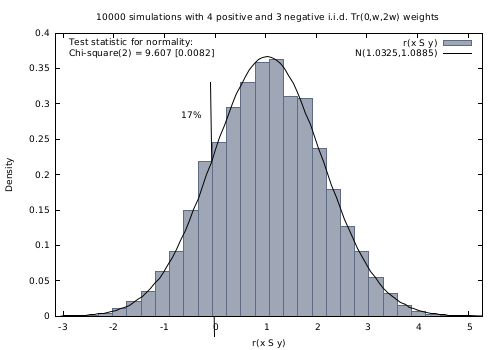

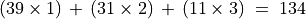

Example

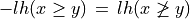

Let x and y be evaluated with respect to 7 equisignificant criteria. Four criteria positively support that x performs at least as well as y, and three criteria support that x does not perform at least as well as y. Suppose E(Wj) = w and Wj ~ Tr(0, 2w, w) for j = 1,…,7. The expected value of the global ‘at least as good as’ characteristic becomes $E(\tilde{r}(x \geq y)) = 4w - 3w = w$, with a variance $V(\tilde{r}(x \geq y)) = 7 \times \frac{1}{6} w^2$.

If w = 1, $E(\tilde{r}(x \geq y)) = 1$ and $sd(\tilde{r}(x \geq y)) = \sqrt{7/6} \approx 1.08$. By the CLT, the bipolar likelihood of the ‘at least as good as’ situation becomes $lh(x \geq y) = -\mathrm{erf}\big(\frac{1}{\sqrt{2}} \frac{-1}{1.08}\big) \approx 0.65$, which corresponds to a probability of (0.65 + 1.0)/2, i.e. about 82%, of being supported by a positive significance majority of criteria.

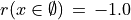

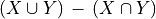

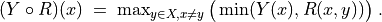

A Monte Carlo simulation with 10 000 runs empirically confirms the effective convergence to a Gaussian (see Fig. 3.3, realised with gretl [4]).

Fig. 3.3 Distribution of 10 000 random outranking characteristic values

Indeed, the simulated values of the global characteristic follow an approximately Gaussian distribution, with an empirical probability of observing a negative majority margin of about 17%.
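A comparable experiment can be sketched with the standard library alone. This is not the gretl simulation behind Fig. 3.3; it is a minimal re-run under the same assumptions as the example above (seven criteria of unit mean weight, triangular uncertainty, four criteria in favour and three against), with an arbitrary seed.

```python
import random
from statistics import mean, stdev

random.seed(1)  # arbitrary seed, only for repeatability

w = 1.0                        # common expected significance weight
chars = [+1] * 4 + [-1] * 3    # four criteria in favour, three against
runs = 10_000

# Each run draws one weight per criterion from Tr(0, 2w, w)
samples = [
    sum(r * random.triangular(0.0, 2.0 * w, w) for r in chars)
    for _ in range(runs)
]

negative_share = sum(s < 0 for s in samples) / runs
print(f"mean = {mean(samples):.2f}, sd = {stdev(samples):.2f}")
print(f"share of negative majority margins = {negative_share:.1%}")
```

The empirical mean and standard deviation should land close to the theoretical values 1 and 1.08, and the share of negative margins close to the 17% reported above; the exact figures depend on the seed.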

3.1.1.3. Confidence level of outranking situations

Now, following the classical outranking approach (see [BIS-2013p]), we may say, from an epistemic perspective, that decision action x outranks decision action y at confidence level alpha %, when

- an expected majority of criteria validates, at confidence level alpha % or higher, a global ‘at least as good as’ situation between x and y, and

- no considerably less performing situation is observed on a discordant criterion.

Dually, decision action x does not outrank decision action y at confidence level alpha %, when

- an expected majority of criteria invalidates, at confidence level alpha % or higher, a global ‘at least as good as’ situation between x and y, and

- no considerably better performing situation is observed on a concordant criterion.
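The first condition of each definition can be read as a cut on the bipolar likelihood. The sketch below assumes that an alpha confidence level corresponds to the bipolar level 2*alpha - 1 and that a characteristic value is put to the neutral value 0 whenever the absolute likelihood stays below that level. Both helpers are hypothetical illustrations; considerable performance differences (vetoes) are deliberately left out.

```python
def bipolar_level(confidence):
    """Map a confidence level in [0.5, 1.0] to the bipolar range [0.0, 1.0]."""
    return 2.0 * confidence - 1.0


def confident_value(r, lh, confidence):
    """Keep the characteristic value r only if its likelihood lh is confident enough."""
    if abs(lh) >= bipolar_level(confidence):
        return r
    return 0.0  # not confident: fall back to the neutral, indeterminate value


print(round(bipolar_level(0.90), 2), round(bipolar_level(0.99), 2))
print(confident_value(+0.29, +0.95, 0.90))   # kept at 90%
print(confident_value(+0.143, +0.65, 0.90))  # put to doubt at 90%
print(confident_value(+0.29, +0.95, 0.99))   # put to doubt at 99%
print(confident_value(-0.43, -0.99, 0.99))   # negative statement kept at 99%
```

Note that the same cut applies to negative statements: a likelihood of -0.99 confidently invalidates the situation at the 99% level.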

Time for a coded example

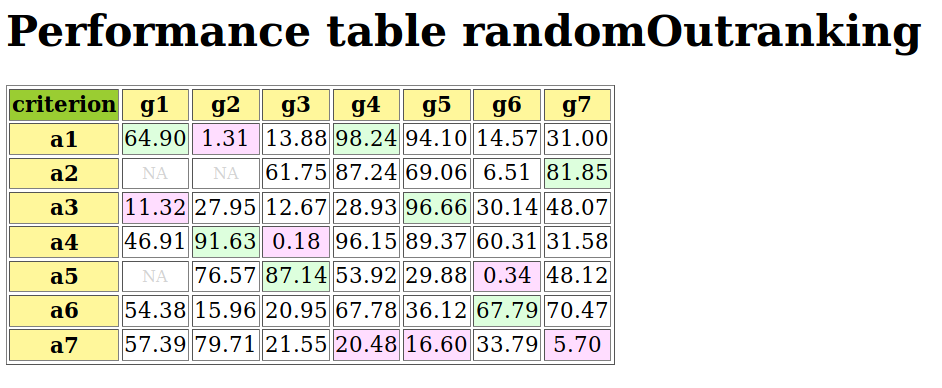

Let us consider the following random performance tableau.

    >>> from randomPerfTabs import RandomPerformanceTableau
    >>> t = RandomPerformanceTableau(
    ...         numberOfActions=7,
    ...         numberOfCriteria=7,seed=100)

    >>> t.showPerformanceTableau(Transposed=True)
     *----  performance tableau -----*
     criteria | weights |  'a1'   'a2'   'a3'   'a4'   'a5'   'a6'   'a7'
     ---------|------------------------------------------------------------
       'g1'   |    1    | 15.17  44.51  57.87  58.00  24.22  29.10  96.58
       'g2'   |    1    | 82.29  43.90    NA   35.84  29.12  34.79  62.22
       'g3'   |    1    | 44.23  19.10  27.73  41.46  22.41  21.52  56.90
       'g4'   |    1    | 46.37  16.22  21.53  51.16  77.01  39.35  32.06
       'g5'   |    1    | 47.67  14.81  79.70  67.48    NA   90.72  80.16
       'g6'   |    1    | 69.62  45.49  22.03  33.83  31.83    NA   48.80
       'g7'   |    1    | 82.88  41.66  12.82  21.92  75.74  15.45   6.05

For the corresponding confident outranking digraph, we require a confidence level of alpha = 90%. The ConfidentBipolarOutrankingDigraph class provides such a construction.

    >>> from outrankingDigraphs import\
    ...         ConfidentBipolarOutrankingDigraph

    >>> g90 = ConfidentBipolarOutrankingDigraph(t,confidence=90)
    >>> print(g90)
     *------- Object instance description ------*
     Instance class        : ConfidentBipolarOutrankingDigraph
     Instance name         : rel_randomperftab_CLT
     # Actions             : 7
     # Criteria            : 7
     Size                  : 15
     Uncertainty model     : triangular(a=0,b=2w)
     Likelihood domain     : [-1.0;+1.0]
     Confidence level      : 0.80 (90.0%)
     Confident credibility : > abs(0.143) (57.1%)
     Determinateness (%)   : 62.07
     Valuation domain      : [-1.00;1.00]
     Attributes            : ['name', 'bipolarConfidenceLevel',
                              'distribution', 'betaParameter', 'actions',
                              'order', 'valuationdomain', 'criteria',
                              'evaluation', 'concordanceRelation',
                              'vetos', 'negativeVetos',
                              'largePerformanceDifferencesCount',
                              'likelihoods', 'confidenceCutLevel',
                              'relation', 'gamma', 'notGamma']

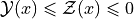

The resulting 90% confident expected outranking relation is shown below.

     1  >>> g90.showRelationTable(LikelihoodDenotation=True)
     2   * ---- Outranking Relation Table -----
     3   r/(lh) |  'a1'    'a2'    'a3'    'a4'    'a5'    'a6'    'a7'
     4   -------|------------------------------------------------------------
     5    'a1'  |  +0.00   +0.71   +0.29   +0.29   +0.29   +0.29   +0.00
     6          |  ( - )  (+1.00) (+0.95) (+0.95) (+0.95) (+0.95) (+0.65)
     7    'a2'  |  -0.71   +0.00   -0.29   +0.00   +0.00   +0.29   -0.57
     8          | (-1.00)  ( - )  (-0.95) (-0.65) (+0.73) (+0.95) (-1.00)
     9    'a3'  |  -0.29   +0.29   +0.00   -0.29   +0.00   +0.00   -0.29
    10          | (-0.95) (+0.95)  ( - )  (-0.95) (-0.73) (-0.00) (-0.95)
    11    'a4'  |  +0.00   +0.00   +0.57   +0.00   +0.29   +0.57   -0.43
    12          | (-0.00) (+0.65) (+1.00)  ( - )  (+0.95) (+1.00) (-0.99)
    13    'a5'  |  -0.29   +0.00   +0.00   +0.00   +0.00   +0.29   -0.29
    14          | (-0.95) (-0.00) (+0.73) (-0.00)  ( - )  (+0.99) (-0.95)
    15    'a6'  |  -0.29   +0.00   +0.00   -0.29   +0.00   +0.00   +0.00
    16          | (-0.95) (-0.00) (+0.73) (-0.95) (+0.73)  ( - )  (-0.00)
    17    'a7'  |  +0.00   +0.71   +0.57   +0.43   +0.29   +0.00   +0.00
    18          | (-0.65) (+1.00) (+1.00) (+0.99) (+0.95) (-0.00)  ( - )
    19   Valuation domain      : [-1.000; +1.000]
    20   Uncertainty model     : triangular(a=2.0,b=2.0)
    21   Likelihood domain     : [-1.0;+1.0]
    22   Confidence level      : 0.80 (90.0%)
    23   Confident credibility : > abs(0.14) (57.1%)
    24   Determinateness       : 0.24 (62.1%)

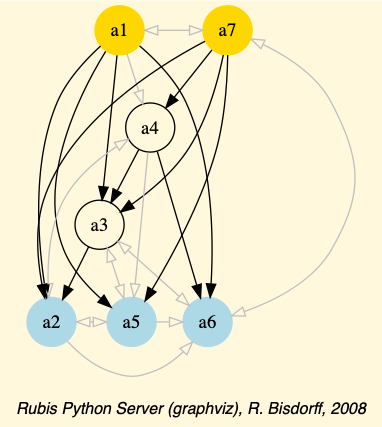

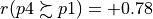

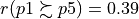

The (lh) figures, indicated in the table above, correspond to bipolar likelihoods, and the required bipolar confidence level equals 2 x 0.90 - 1.0 = 0.80 (see Line 22 above). Action ‘a1’ thus confidently outranks all other actions, except ‘a7’, where the actual likelihood (+0.65) is lower than the required one (0.80) and where we furthermore observe a considerable counter-performance on criterion ‘g1’.

Notice also the lack of confidence in the outranking situations we observe between action ‘a2’ and actions ‘a4’ and ‘a5’. In the deterministic case we would have r(a2 outranks a4) = -0.143 and r(a2 outranks a5) = +0.143. All outranking situations with a characteristic value lower than or equal to abs(0.143), i.e. a majority support of 1.143/2 = 57.1% or less, appear indeed not to be confident at level 90% (see Line 23 above).

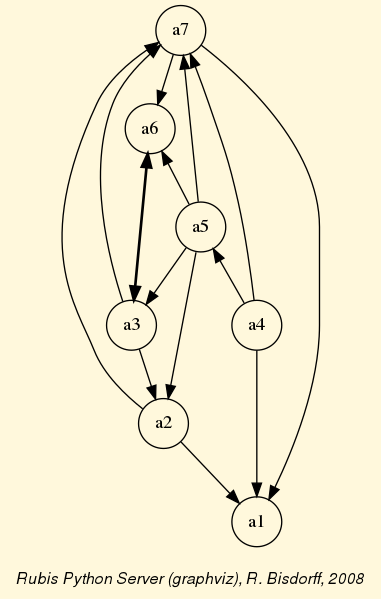

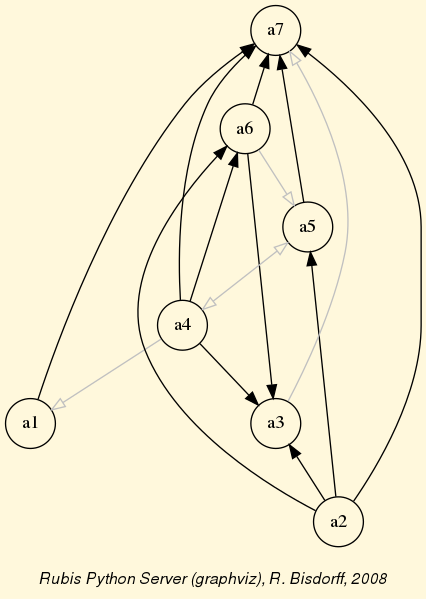

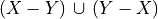

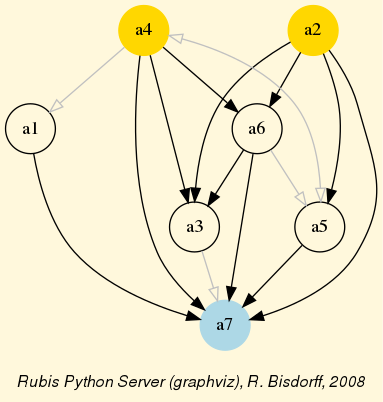

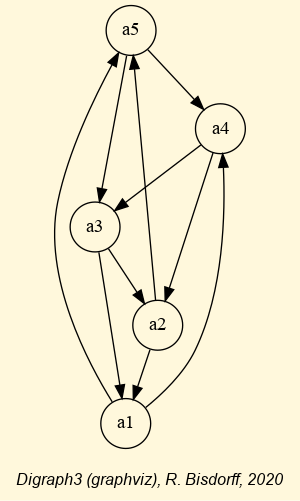

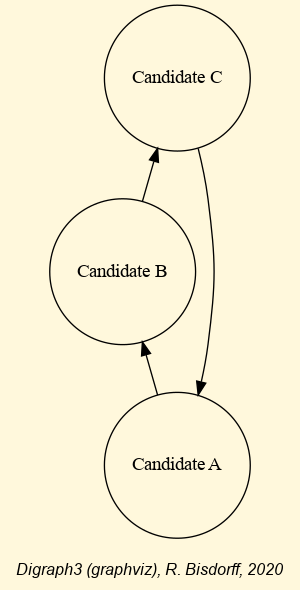

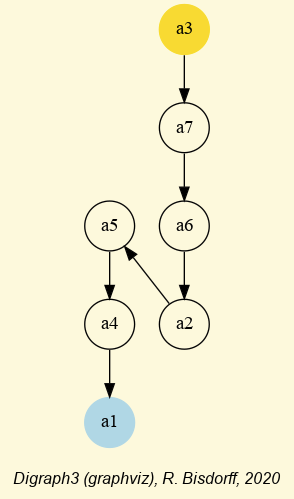

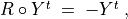

We may draw the corresponding strict 90%-confident outranking digraph, oriented by its initial and terminal strict prekernels (see Fig. 3.4).

    >>> gcd90 = ~ (-g90)
    >>> gcd90.showPreKernels()
     *--- Computing preKernels ---*
     Dominant preKernels :
     ['a1', 'a7']
        independence :  0.0
        dominance    :  0.2857
        absorbency   :  -0.7143
        covering     :  0.800
     Absorbent preKernels :
     ['a2', 'a5', 'a6']
        independence :  0.0
        dominance    :  -0.2857
        absorbency   :  0.2857
        covered      :  0.583
    >>> gcd90.exportGraphViz(fileName='confidentOutranking',
    ...      firstChoice=['a1', 'a7'],lastChoice=['a2', 'a5', 'a6'])

     *---- exporting a dot file for GraphViz tools ---------*
     Exporting to confidentOutranking.dot
     dot -Grankdir=BT -Tpng confidentOutranking.dot -o confidentOutranking.png

Fig. 3.4 Strict 90%-confident outranking digraph oriented by its prekernels

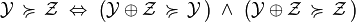

Now, what becomes of this 90%-confident outranking digraph when we require a stronger confidence level of, say, 99%?

     1  >>> g99 = ConfidentBipolarOutrankingDigraph(t,confidence=99)
     2  >>> g99.showRelationTable()
     3   * ---- Outranking Relation Table -----
     4   r/(lh) |  'a1'    'a2'    'a3'    'a4'    'a5'    'a6'    'a7'
     5   -------|------------------------------------------------------------
     6    'a1'  |  +0.00   +0.71   +0.00   +0.00   +0.00   +0.00   +0.00
     7          |  ( - )  (+1.00) (+0.95) (+0.95) (+0.95) (+0.95) (+0.65)
     8    'a2'  |  -0.71   +0.00   +0.00   +0.00   +0.00   +0.00   -0.57
     9          | (-1.00)  ( - )  (-0.95) (-0.65) (+0.73) (+0.95) (-1.00)
    10    'a3'  |  +0.00   +0.00   +0.00   +0.00   +0.00   +0.00   +0.00
    11          | (-0.95) (+0.95)  ( - )  (-0.95) (-0.73) (-0.00) (-0.95)
    12    'a4'  |  +0.00   +0.00   +0.57   +0.00   +0.00   +0.57   -0.43
    13          | (-0.00) (+0.65) (+1.00)  ( - )  (+0.95) (+1.00) (-0.99)
    14    'a5'  |  +0.00   +0.00   +0.00   +0.00   +0.00   +0.29   +0.00
    15          | (-0.95) (-0.00) (+0.73) (-0.00)  ( - )  (+0.99) (-0.95)
    16    'a6'  |  +0.00   +0.00   +0.00   +0.00   +0.00   +0.00   +0.00
    17          | (-0.95) (-0.00) (+0.73) (-0.95) (+0.73)  ( - )  (-0.00)
    18    'a7'  |  +0.00   +0.71   +0.57   +0.43   +0.00   +0.00   +0.00
    19          | (-0.65) (+1.00) (+1.00) (+0.99) (+0.95) (-0.00)  ( - )
    20   Valuation domain      : [-1.000; +1.000]
    21   Uncertainty model     : triangular(a=2.0,b=2.0)
    22   Likelihood domain     : [-1.0;+1.0]
    23   Confidence level      : 0.98 (99.0%)
    24   Confident credibility : > abs(0.286) (64.3%)
    25   Determinateness       : 0.13 (56.6%)

At 99% confidence, the minimal required significance majority support amounts to 64.3% (see Line 24 above). As a result, most outranking situations are no longer validated, like the outranking situations between action ‘a1’ and actions ‘a3’, ‘a4’, ‘a5’ and ‘a6’ (see Line 6 above). The overall epistemic determination of the digraph consequently drops from 62.1% to 56.6% (see Line 25).

Finally, what becomes of the previous 90%-confident outranking digraph if the uncertainty concerning the criteria significance weights is modelled with a larger variance, like uniform variates (see Line 2 below)?

     1  >>> gu90 = ConfidentBipolarOutrankingDigraph(t,
     2  ...            confidence=90,distribution='uniform')
     3
     4  >>> gu90.showRelationTable()
     5   * ---- Outranking Relation Table -----
     6   r/(lh) |  'a1'    'a2'    'a3'    'a4'    'a5'    'a6'    'a7'
     7   -------|------------------------------------------------------------
     8    'a1'  |  +0.00   +0.71   +0.29   +0.29   +0.29   +0.29   +0.00
     9          |  ( - )  (+1.00) (+0.84) (+0.84) (+0.84) (+0.84) (+0.49)
    10    'a2'  |  -0.71   +0.00   -0.29   +0.00   +0.00   +0.29   -0.57
    11          | (-1.00)  ( - )  (-0.84) (-0.49) (+0.56) (+0.84) (-1.00)
    12    'a3'  |  -0.29   +0.29   +0.00   -0.29   +0.00   +0.00   -0.29
    13          | (-0.84) (+0.84)  ( - )  (-0.84) (-0.56) (-0.00) (-0.84)
    14    'a4'  |  +0.00   +0.00   +0.57   +0.00   +0.29   +0.57   -0.43
    15          | (-0.00) (+0.49) (+1.00)  ( - )  (+0.84) (+1.00) (-0.95)
    16    'a5'  |  -0.29   +0.00   +0.00   +0.00   +0.00   +0.29   -0.29
    17          | (-0.84) (-0.00) (+0.56) (-0.00)  ( - )  (+0.92) (-0.84)
    18    'a6'  |  -0.29   +0.00   +0.00   -0.29   +0.00   +0.00   +0.00
    19          | (-0.84) (-0.00) (+0.56) (-0.84) (+0.56)  ( - )  (-0.00)
    20    'a7'  |  +0.00   +0.71   +0.57   +0.43   +0.29   +0.00   +0.00
    21          | (-0.49) (+1.00) (+1.00) (+0.95) (+0.84) (-0.00)  ( - )
    22   Valuation domain   : [-1.000; +1.000]
    23   Uncertainty model  : uniform(a=2.0,b=2.0)
    24   Likelihood domain  : [-1.0;+1.0]
    25   Confidence level   : 0.80 (90.0%)
    26   Confident majority : 0.14 (57.1%)
    27   Determinateness    : 0.24 (62.1%)

Despite lower likelihood values (compare with the g90 relation table above), we keep the same confident majority level of 57.1% (see Line 26 above) and, hence, also the same 90%-confident outranking digraph.
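Why the confident majority level does not move can be illustrated with a small computation. The sketch assumes unit mean weights, a per-criterion variance of 1/6 (triangular) or 1/3 (uniform), and two typical vote patterns: four criteria against three (a margin of 1/7) and four against two with one criterion not taking position (a margin of 2/7). The helper is an illustration, not Digraph3 code.

```python
from math import erf, sqrt


def likelihood(n_for, n_against, unit_variance):
    """Bipolar likelihood for unit mean weights and a common weight variance."""
    margin = n_for - n_against
    variance = (n_for + n_against) * unit_variance
    return erf(margin / sqrt(2.0 * variance))


required = 2 * 0.90 - 1  # bipolar level of a 90% confidence requirement
models = {"triangular": 1 / 6, "uniform": 1 / 3}
for name, unit_variance in models.items():
    lh_1 = likelihood(4, 3, unit_variance)  # majority margin 1/7
    lh_2 = likelihood(4, 2, unit_variance)  # majority margin 2/7
    print(f"{name:10s} 1/7: {lh_1:+.2f} ({lh_1 >= required})  "
          f"2/7: {lh_2:+.2f} ({lh_2 >= required})")
```

Under both models the margin 1/7 fails the 0.80 requirement (about +0.65 and +0.49) whereas the margin 2/7 passes it (about +0.95 and +0.84), so the cut falls between the same two majority margins.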

Note

To conclude, it is worth noticing again that it is in fact the neutral value of our bipolar-valued epistemic logic that allows us to easily handle whether or not outranking situations are alpha%-confident when confronted with uncertain criteria significance weights. Remarkable, furthermore, is the service rendered by the standard Gaussian error function (erf): it immediately delivers signed likelihood values that concern either a positive relational statement or, when negative, its negated version.


3.1.2. On stable outrankings with ordinal criteria significance weights

3.1.2.1. Cardinal or ordinal criteria significance weights

The required cardinal significance weights of the performance criteria represent the Achilles’ heel of the outranking approach. Rarely indeed will a decision maker be cognitively competent to suggest precise decimal-valued criteria significance weights. More often, the decision problem will involve more or less equally important decision objectives with more or less equi-significant criteria. A random example of such a decision problem may be generated with the Random3ObjectivesPerformanceTableau class.

     1  >>> from randomPerfTabs import \
     2  ...         Random3ObjectivesPerformanceTableau
     3
     4  >>> t = Random3ObjectivesPerformanceTableau(
     5  ...         numberOfActions=7,
     6  ...         numberOfCriteria=9,seed=102)
     7
     8  >>> t
     9   *------- PerformanceTableau instance description ------*
    10   Instance class   : Random3ObjectivesPerformanceTableau
    11   Seed             : 102
    12   Instance name    : random3ObjectivesPerfTab
    13   # Actions        : 7
    14   # Objectives     : 3
    15   # Criteria       : 9
    16   Attributes       : ['name', 'valueDigits', 'BigData', 'OrdinalScales',
    17                       'missingDataProbability', 'negativeWeightProbability',
    18                       'randomSeed', 'sumWeights', 'valuationPrecision',
    19                       'commonScale', 'objectiveSupportingTypes', 'actions',
    20                       'objectives', 'criteriaWeightMode', 'criteria',
    21                       'evaluation', 'weightPreorder']
    22  >>> t.showObjectives()
    23   *------ show objectives -------"
    24   Eco: Economical aspect
    25      ec1 criterion of objective Eco 8
    26      ec4 criterion of objective Eco 8
    27      ec8 criterion of objective Eco 8
    28     Total weight: 24.00 (3 criteria)
    29   Soc: Societal aspect
    30      so2 criterion of objective Soc 12
    31      so7 criterion of objective Soc 12
    32     Total weight: 24.00 (2 criteria)
    33   Env: Environmental aspect
    34      en3 criterion of objective Env 6
    35      en5 criterion of objective Env 6
    36      en6 criterion of objective Env 6
    37      en9 criterion of objective Env 6
    38     Total weight: 24.00 (4 criteria)

In this example (see Listing 3.1), we face seven decision alternatives that are assessed with respect to three equally important decision objectives concerning: first, an economical aspect (Line 24) with a coalition of three performance criteria of significance weight 8, secondly, a societal aspect (Line 29) with a coalition of two performance criteria of significance weight 12, and thirdly, an environmental aspect (Line 33) with a coalition of four performance criteria of significance weight 6.

The question we tackle is the following: how dependent is the corresponding bipolar-valued outranking digraph on the actual values of the significance weights? In the previous section, we assumed that the criteria significance weights were random variables. Here, we shall assume that we know for sure only the preordering of the significance weights. In our example we see indeed three increasing weight equivalence classes (Listing 3.2).

    >>> t.showWeightPreorder()
     ['en3', 'en5', 'en6', 'en9'] (6) <
     ['ec1', 'ec4', 'ec8'] (8) <
     ['so2', 'so7'] (12)

How stable do the outranking situations now appear when assuming only ordinal significance weights?

3.1.2.2. Qualifying the stability of outranking situations

Let us construct the normalized bipolar-valued outranking digraph corresponding with the previous 3 Objectives performance tableau t.

     1  >>> from outrankingDigraphs import BipolarOutrankingDigraph
     2  >>> g = BipolarOutrankingDigraph(t,Normalized=True)
     3  >>> g.showRelationTable()
     4   * ---- Relation Table -----
     5   r(>=) |  'p1'    'p2'    'p3'    'p4'    'p5'    'p6'    'p7'
     6   ------|------------------------------------------------
     7    'p1' |  +1.00   -0.42   +0.00   -0.69   +0.39   +0.11   -0.06
     8    'p2' |  +0.58   +1.00   +0.83   +0.00   +0.58   +0.58   +0.58
     9    'p3' |  +0.25   -0.33   +1.00   +0.00   +0.50   +1.00   +0.25
    10    'p4' |  +0.78   +0.00   +0.61   +1.00   +1.00   +1.00   +0.67
    11    'p5' |  -0.11   -0.50   -0.25   -0.89   +1.00   +0.11   -0.14
    12    'p6' |  +0.22   -0.42   +0.00   -1.00   +0.17   +1.00   -0.11
    13    'p7' |  +0.22   -0.50   +0.17   -0.06   +0.78   +0.42   +1.00

We notice on the principal diagonal, the certainly validated reflexive terms +1.00 (see Listing 3.3 Lines 7-13). Now, we know for sure that unanimous outranking situations are completely independent of the significance weights. Similarly, all outranking situations that are supported by a majority significance in each coalition of equi-significant criteria are also in fact independent of the actual importance we attach to each individual criteria coalition. But we are also able to test (see [BIS-2014p]) if an outranking situation is independent of all the potential significance weights that respect the given preordering of the weights. Mind that there are, for sure, always outranking situations that are indeed dependent on the very values we allocate to the criteria significance weights.

Such a stability denotation of outranking situations is readily available with the common showRelationTable() method.

     1  >>> g.showRelationTable(StabilityDenotation=True)
     2  * ---- Relation Table -----
     3  r/(stab)  |  'p1'    'p2'    'p3'    'p4'    'p5'    'p6'    'p7'
     4  ----------|------------------------------------------
     5     'p1'   |  +1.00   -0.42   +0.00   -0.69   +0.39   +0.11   -0.06
     6            |   (+4)    (-2)    (+0)    (-3)    (+2)    (+2)    (-1)
     7     'p2'   |  +0.58   +1.00   +0.83    0.00   +0.58   +0.58   +0.58
     8            |   (+2)    (+4)    (+3)    (+2)    (+2)    (+2)    (+2)
     9     'p3'   |  +0.25   -0.33   +1.00    0.00   +0.50   +1.00   +0.25
    10            |   (+2)    (-2)    (+4)    (0)     (+2)    (+2)    (+1)
    11     'p4'   |  +0.78    0.00   +0.61   +1.00   +1.00   +1.00   +0.67
    12            |   (+3)    (-1)    (+3)    (+4)    (+4)    (+4)    (+2)
    13     'p5'   |  -0.11   -0.50   -0.25   -0.89   +1.00   +0.11   -0.14
    14            |   (-2)    (-2)    (-2)    (-3)    (+4)    (+2)    (-2)
    15     'p6'   |  +0.22   -0.42    0.00   -1.00   +0.17   +1.00   -0.11
    16            |   (+2)    (-2)    (+1)    (-2)    (+2)    (+4)    (-2)
    17     'p7'   |  +0.22   -0.50   +0.17   -0.06   +0.78   +0.42   +1.00
    18            |   (+2)    (-2)    (+1)    (-1)    (+3)    (+2)    (+4)

We may thus distinguish the following bipolar-valued stability levels:

- +4 | -4 : unanimous outranking | outranked situation. The pairwise trivial reflexive outrankings, for instance, all show this stability level;

- +3 | -3 : validated outranking | outranked situation in each coalition of equisignificant criteria. This is, for instance, the case for the outranking situation observed between alternatives p1 and p4 (see Listing 3.4 Lines 6 and 12);

- +2 | -2 : outranking | outranked situation validated with all potential significance weights that are compatible with the given significance preorder (see Listing 3.2). This is the case for the comparison of alternatives p1 and p2 (see Listing 3.4 Lines 6 and 8);

- +1 | -1 : validated outranking | outranked situation with the given significance weights, a situation we may observe between alternatives p3 and p7 (see Listing 3.4 Lines 10 and 18);

- 0 : indeterminate relational situation, like the one between alternatives p1 and p3 (see Listing 3.4 Lines 6 and 10).

It is worth noticing that, in the limit case where all performance criteria appear equi-significant, i.e. there is a single equivalence class containing all the performance criteria, we may only distinguish stability levels +4 and +3 (resp. -4 and -3). Furthermore, when in such a case an outranking (resp. outranked) situation is validated at level +3 (resp. -3), no potential preordering of the criteria significance weights exists that could qualify the same situation as outranked (resp. outranking) at level -2 (resp. +2).

In the other limit case, when all performance criteria admit different significance weights, i.e. the significance weights may be linearly ordered, no stability level +3 or -3 may be observed.

As mentioned above, all reflexive comparisons confirm a unanimous outranking situation: all decision alternatives are indeed trivially performing as well as themselves. But there also appear two non-reflexive unanimous outranking situations: when comparing alternative p4 with alternatives p5 and p6 (see Listing 3.4 Lines 11 and 12).

Let us inspect the details of how alternatives p4 and p5 compare.

    >>> g.showPairwiseComparison('p4','p5')
     *------------  pairwise comparison ----*
     Comparing actions : (p4, p5)
     crit. wght.    g(x)   g(y)    diff  |   ind   pref     r()  |
     ec1    8.00   85.19  46.75  +38.44  |  5.00  10.00   +8.00  |
     ec4    8.00   72.26   8.96  +63.30  |  5.00  10.00   +8.00  |
     ec8    8.00   44.62  35.91   +8.71  |  5.00  10.00   +8.00  |
     en3    6.00   80.81  31.05  +49.76  |  5.00  10.00   +6.00  |
     en5    6.00   49.69  29.52  +20.17  |  5.00  10.00   +6.00  |
     en6    6.00   66.21  31.22  +34.99  |  5.00  10.00   +6.00  |
     en9    6.00   50.92   9.83  +41.09  |  5.00  10.00   +6.00  |
     so2   12.00   49.05  12.36  +36.69  |  5.00  10.00  +12.00  |
     so7   12.00   55.57  44.92  +10.65  |  5.00  10.00  +12.00  |
     Valuation in range: -72.00 to +72.00; global concordance: +72.00

Alternative p4 is indeed performing unanimously at least as well as alternative p5: r(p4 outranks p5) = +1.00 (see Listing 3.4 Line 11).

The converse comparison does not, however, deliver such a unanimous outranked situation. This comparison only qualifies at stability level -3 (see Listing 3.4 Lines 13 and 14, r(p5 outranks p4) = -0.89).

     1  >>> g.showPairwiseComparison('p5','p4')
     2   *------------  pairwise comparison ----*
     3   Comparing actions : (p5, p4)
     4   crit. wght.    g(x)   g(y)    diff  |   ind   pref     r()  |
     5   ec1    8.00   46.75  85.19  -38.44  |  5.00  10.00   -8.00  |
     6   ec4    8.00    8.96  72.26  -63.30  |  5.00  10.00   -8.00  |
     7   ec8    8.00   35.91  44.62   -8.71  |  5.00  10.00   +0.00  |
     8   en3    6.00   31.05  80.81  -49.76  |  5.00  10.00   -6.00  |
     9   en5    6.00   29.52  49.69  -20.17  |  5.00  10.00   -6.00  |
    10   en6    6.00   31.22  66.21  -34.99  |  5.00  10.00   -6.00  |
    11   en9    6.00    9.83  50.92  -41.09  |  5.00  10.00   -6.00  |
    12   so2   12.00   12.36  49.05  -36.69  |  5.00  10.00  -12.00  |
    13   so7   12.00   44.92  55.57  -10.65  |  5.00  10.00  -12.00  |
    14   Valuation in range: -72.00 to +72.00; global concordance: -64.00

Indeed, on criterion ec8 we observe a small negative performance difference of -8.71 (see Listing 3.6 Line 7) which is effectively below the supposed preference discrimination threshold of 10.00. Yet, the outranked situation is supported by a majority of criteria in each decision objective. Hence, the reported preferential situation is completely independent of any chosen significance weights.
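The per-objective majorities invoked here can be counted directly from the signs of the r() column of the comparison of p5 with p4. The short sketch below groups the criteria by the two-letter prefix of their names, which in this example identifies both the decision objective and the coalition of equisignificant criteria; the dictionary is transcribed by hand from the listing above.

```python
from collections import defaultdict

# Sign of the marginal characteristic r() of 'p5 at least as good as p4'
p5_vs_p4 = {
    "ec1": -1, "ec4": -1, "ec8": 0,
    "en3": -1, "en5": -1, "en6": -1, "en9": -1,
    "so2": -1, "so7": -1,
}

# Net number of supporting minus opposing criteria per objective
net_by_objective = defaultdict(int)
for criterion, r in p5_vs_p4.items():
    net_by_objective[criterion[:2]] += r

unanimous = all(r == -1 for r in p5_vs_p4.values())
majority_everywhere = all(net < 0 for net in net_by_objective.values())
print(dict(net_by_objective), unanimous, majority_everywhere)
```

The comparison is not unanimous because of the indeterminate criterion ec8, yet each objective shows a negative net count, which is consistent with the stability level -3 reported for this pair.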

Let us now consider a comparison, like the one between alternatives p2 and p1, that is only qualified at stability level +2, resp. -2.

    >>> g.showPairwiseOutrankings('p2','p1')
     *------------  pairwise comparison ----*
     Comparing actions : (p2, p1)
     crit. wght.    g(x)   g(y)    diff  |   ind   pref     r()  |
     ec1    8.00   89.77  38.11  +51.66  |  5.00  10.00   +8.00  |
     ec4    8.00   86.00  22.65  +63.35  |  5.00  10.00   +8.00  |
     ec8    8.00   89.43  77.02  +12.41  |  5.00  10.00   +8.00  |
     en3    6.00   20.79  58.16  -37.37  |  5.00  10.00   -6.00  |
     en5    6.00   23.83  31.40   -7.57  |  5.00  10.00   +0.00  |
     en6    6.00   18.66  11.41   +7.25  |  5.00  10.00   +6.00  |
     en9    6.00   26.65  44.37  -17.72  |  5.00  10.00   -6.00  |
     so2   12.00   89.12  22.43  +66.69  |  5.00  10.00  +12.00  |
     so7   12.00   84.73  28.41  +56.32  |  5.00  10.00  +12.00  |
     Valuation in range: -72.00 to +72.00; global concordance: +42.00
     *------------  pairwise comparison ----*
     Comparing actions : (p1, p2)
     crit. wght.    g(x)   g(y)    diff  |   ind   pref     r()  |
     ec1    8.00   38.11  89.77  -51.66  |  5.00  10.00   -8.00  |
     ec4    8.00   22.65  86.00  -63.35  |  5.00  10.00   -8.00  |
     ec8    8.00   77.02  89.43  -12.41  |  5.00  10.00   -8.00  |
     en3    6.00   58.16  20.79  +37.37  |  5.00  10.00   +6.00  |
     en5    6.00   31.40  23.83   +7.57  |  5.00  10.00   +6.00  |
     en6    6.00   11.41  18.66   -7.25  |  5.00  10.00   +0.00  |
     en9    6.00   44.37  26.65  +17.72  |  5.00  10.00   +6.00  |
     so2   12.00   22.43  89.12  -66.69  |  5.00  10.00  -12.00  |
     so7   12.00   28.41  84.73  -56.32  |  5.00  10.00  -12.00  |
     Valuation in range: -72.00 to +72.00; global concordance: -30.00

In both comparisons, the performances observed with respect to the environmental decision objective do not validate, with a significant majority, the otherwise unanimous outranking, resp. outranked, situations. Hence, the stability of the reported preferential situations in fact depends on choosing significance weights that are compatible with the given significance weights preorder (see Listing 3.2).
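One way to see why these two situations hold for every weighting that respects the preorder 6 < 8 < 12 is to write each class weight as a sum of strictly positive increments. The global margin then becomes a positive combination of the cumulated net counts taken from the most significant class downwards, so its sign is fixed as soon as all these cumulated counts share the same sign. The sketch below implements this reasoning; it is a derivation offered for illustration, with a hypothetical function name, and is not claimed to be the test Digraph3 implements.

```python
def stable_sign(class_nets):
    """Sign kept under all weights respecting the preorder, or 0 if none.

    class_nets lists, by increasing significance class, the number of
    supporting minus the number of opposing criteria in that class.
    """
    tails = []
    running = 0
    for net in reversed(class_nets):  # most significant class first
        running += net
        tails.append(running)
    if all(t >= 0 for t in tails) and any(t > 0 for t in tails):
        return +1
    if all(t <= 0 for t in tails) and any(t < 0 for t in tails):
        return -1
    return 0


# Net counts per class (weights 6, 8, 12), read off the two listings above
print(stable_sign([-1, +3, +2]))  # p2 compared with p1
print(stable_sign([+3, -3, -2]))  # p1 compared with p2
```

For p2 compared with p1 the cumulated counts are 2, 5 and 4, all positive; for the converse comparison they are -2, -5 and -2, all negative. This agrees with the stability levels +2 and -2 shown in Listing 3.4.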

Let us finally inspect a comparison that is only qualified at stability level +1, like the one between alternatives p7 and p3 (see Listing 3.8).

1>>> g.showPairwiseOutrankings('p7','p3')

2*------------ pairwise comparison ----*

3Comparing actions : (p7, p3)

4crit. wght. g(x) g(y) diff | ind pref r() |

5ec1 8.00 15.33 80.19 -64.86 | 5.00 10.00 -8.00 |

6ec4 8.00 36.31 68.70 -32.39 | 5.00 10.00 -8.00 |

7ec8 8.00 38.31 91.94 -53.63 | 5.00 10.00 -8.00 |

8en3 6.00 30.70 46.78 -16.08 | 5.00 10.00 -6.00 |

9en5 6.00 35.52 27.25 +8.27 | 5.00 10.00 +6.00 |

10en6 6.00 69.71 1.65 +68.06 | 5.00 10.00 +6.00 |

11en9 6.00 13.10 14.85 -1.75 | 5.00 10.00 +6.00 |

12so2 12.00 68.06 58.85 +9.21 | 5.00 10.00 +12.00 |

13so7 12.00 58.45 15.49 +42.96 | 5.00 10.00 +12.00 |

14Valuation in range: -72.00 to +72.00; global concordance: +12.00

15*------------ pairwise comparison ----*

16Comparing actions : (p3, p7)

17crit. wght. g(x) g(y) diff | ind pref r() |

18ec1 8.00 80.19 15.33 +64.86 | 5.00 10.00 +8.00 |

19ec4 8.00 68.70 36.31 +32.39 | 5.00 10.00 +8.00 |

20ec8 8.00 91.94 38.31 +53.63 | 5.00 10.00 +8.00 |

21en3 6.00 46.78 30.70 +16.08 | 5.00 10.00 +6.00 |

22en5 6.00 27.25 35.52 -8.27 | 5.00 10.00 +0.00 |

23en6 6.00 1.65 69.71 -68.06 | 5.00 10.00 -6.00 |

24en9 6.00 14.85 13.10 +1.75 | 5.00 10.00 +6.00 |

25so2 12.00 58.85 68.06 -9.21 | 5.00 10.00 +0.00 |

26so7 12.00 15.49 58.45 -42.96 | 5.00 10.00 -12.00 |

27Valuation in range: -72.00 to +72.00; global concordance: +18.00

In both cases, choosing significance weights that are just compatible with the given weights preorder will not always result in positively validated outranking situations.

3.1.2.3. Computing the stability denotation of outranking situations

Stability levels 4 and 3 are easy to detect when they occur. Detecting a stability level 2 is far less obvious. Here again, it is precisely the bipolar-valued epistemic characteristic domain that gives us a way to implement an effective test for stability levels +2 and -2 (see [BIS-2004_1p], [BIS-2004_2p]).

Let us consider the significance equivalence classes we observe in the given weights preorder. Here we observe three classes: 6, 8, and 12, in increasing order (see Listing 3.2). In the pairwise comparisons shown above these equivalence classes may appear positively or negatively, besides the indeterminate significance of value 0. We thus get the following ordered bipolar list of significance weights:

W = [-12, -8, -6, 0, 6, 8, 12].

In all the pairwise marginal comparisons shown in the previous Section, we may observe that each one of the nine criteria assigns one precise item out of this list W. Let us denote q[i] the number of criteria assigning item W[i], and Q[i] the cumulative sums of these q[i] counts, where i is an index in the range of the length of list W.

In the comparison of alternatives p2 and p1, for instance (see Listing 3.7), we observe the following counts:

| W[i] | -12 | -8 | -6 | 0 | 6 | 8 | 12 |
|---|---|---|---|---|---|---|---|
| q[i] | 0 | 0 | 2 | 1 | 1 | 3 | 2 |
| Q[i] | 0 | 0 | 2 | 3 | 4 | 7 | 9 |

Let us denote -q and -Q the reversed versions of the q and the Q lists. We thus obtain the following result.

| W[i] | -12 | -8 | -6 | 0 | 6 | 8 | 12 |
|---|---|---|---|---|---|---|---|
| -q[i] | 2 | 3 | 1 | 1 | 2 | 0 | 0 |
| -Q[i] | 2 | 5 | 6 | 7 | 9 | 9 | 9 |

Now, a pairwise outranking situation will be qualified at stability level +2, i.e. positively validated with any significance weights that are compatible with the given weights preorder, when for all i, we observe Q[i] <= -Q[i] and there exists one i such that Q[i] < -Q[i]. Similarly, a pairwise outranked situation will be qualified at stability level -2, when for all i, we observe Q[i] >= -Q[i] and there exists one i such that Q[i] > -Q[i] (see [BIS-2004_2p]).

We may verify, for instance, that the outranking situation observed between p2 and p1 does indeed satisfy this first order distributional dominance condition.

| W[i] | -12 | -8 | -6 | 0 | 6 | 8 | 12 |
|---|---|---|---|---|---|---|---|
| Q[i] | 0 | 0 | 2 | 3 | 4 | 7 | 9 |
| -Q[i] | 2 | 5 | 6 | 7 | 9 | 9 | 9 |

Notice that outranking situations qualified at stability levels 4 and 3 evidently also pass the stability level 2 test above. The outranking situation between alternatives p7 and p3 does not, however, pass this test (see Listing 3.8).

| W[i] | -12 | -8 | -6 | 0 | 6 | 8 | 12 |
|---|---|---|---|---|---|---|---|
| q[i] | 0 | 3 | 1 | 0 | 3 | 0 | 2 |
| Q[i] | 0 | 3 | 4 | 4 | 7 | 7 | 9 |
| -Q[i] | 2 | 2 | 5 | 5 | 6 | 9 | 9 |

This time, not all the Q[i] are lower than or equal to the corresponding -Q[i] terms. Hence the outranking situation between p7 and p3 is not positively validated with all potential significance weights that are compatible with the given weights preorder.
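The dominance test described above can be sketched in a few lines of plain Python. This is a minimal illustration and not the Digraph3 implementation: the function name `stability_level_2` and its return convention (+2, -2, or 0 when neither condition holds) are assumptions made for this example. The marginal r() values are copied from Listings 3.7 and 3.8.

```python
from itertools import accumulate

# Ordered bipolar list of significance weights, as given in the text
W = [-12, -8, -6, 0, 6, 8, 12]

def stability_level_2(marginals, weights=W):
    """Return +2, -2, or 0 (hypothetical convention: 0 = neither test passes)."""
    # q[i]: number of criteria assigning item W[i]
    q = [sum(1 for r in marginals if r == w) for w in weights]
    Q = list(accumulate(q))                # cumulative sums Q[i]
    Q_rev = list(accumulate(reversed(q)))  # cumulative sums of reversed q, i.e. -Q[i]
    pairs = list(zip(Q, Q_rev))
    if all(a <= b for a, b in pairs) and any(a < b for a, b in pairs):
        return +2
    if all(a >= b for a, b in pairs) and any(a > b for a, b in pairs):
        return -2
    return 0

# Marginal r() values read from the pairwise comparison listings
p2_p1 = [8, 8, 8, -6, 0, 6, -6, 12, 12]
p1_p2 = [-8, -8, -8, 6, 6, 0, 6, -12, -12]
p7_p3 = [-8, -8, -8, -6, 6, 6, 6, 12, 12]

print(stability_level_2(p2_p1))  # 2
print(stability_level_2(p1_p2))  # -2
print(stability_level_2(p7_p3))  # 0
```

The comparison of p2 with p1 passes the positive test, the converse comparison passes the negative one, and the comparison of p7 with p3 passes neither, in line with the tables above.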

Using this stability denotation, we may, hence, define the following robust version of a bipolar-valued outranking digraph.

3.1.2.4. Robust bipolar-valued outranking digraphs

We say that decision alternative x robustly outranks decision alternative y when

x positively outranks y at stability level higher or equal to 2 and we may not observe any considerable counter-performance of x on a discordant criterion.

Dually, we say that decision alternative x does not robustly outrank decision alternative y when

x negatively outranks y at stability level lower or equal to -2 and we may not observe any considerable better performance of x on a discordant criterion.

The corresponding robust outranking digraph may be computed with the RobustOutrankingDigraph class as follows.

1>>> from outrankingDigraphs import RobustOutrankingDigraph

2>>> rg = RobustOutrankingDigraph(t) # same t as before

3>>> rg

4 *------- Object instance description ------*

5 Instance class : RobustOutrankingDigraph

6 Instance name : robust_random3ObjectivesPerfTab

7 # Actions : 7

8 # Criteria : 9

9 Size : 22

10 Determinateness (%) : 68.45

11 Valuation domain : [-1.00;1.00]

12 Attributes : ['name', 'methodData', 'actions', 'order',

13 'criteria', 'evaluation', 'vetos',

14 'valuationdomain', 'cardinalRelation',

15 'ordinalRelation', 'equisignificantRelation',

16 'unanimousRelation', 'relation',

17 'gamma', 'notGamma']

18>>> rg.showRelationTable(StabilityDenotation=True)

19 * ---- Relation Table -----

20 r/(stab) | 'p1' 'p2' 'p3' 'p4' 'p5' 'p6' 'p7'

21 ---------|------------------------------------------------------------

22 'p1' | +1.00 -0.42 +0.00 -0.69 +0.39 +0.11 +0.00

23 | (+4) (-2) (+0) (-3) (+2) (+2) (-1)

24 'p2' | +0.58 +1.00 +0.83 +0.00 +0.58 +0.58 +0.58

25 | (+2) (+4) (+3) (+2) (+2) (+2) (+2)

26 'p3' | +0.25 -0.33 +1.00 +0.00 +0.50 +1.00 +0.00

27 | (+2) (-2) (+4) (+0) (+2) (+2) (+1)

28 'p4' | +0.78 +0.00 +0.61 +1.00 +1.00 +1.00 +0.67

29 | (+3) (-1) (+3) (+4) (+4) (+4) (+2)

30 'p5' | -0.11 -0.50 -0.25 -0.89 +1.00 +0.11 -0.14

31 | (-2) (-2) (-2) (-3) (+4) (+2) (-2)

32 'p6' | +0.22 -0.42 +0.00 -1.00 +0.17 +1.00 -0.11

33 | (+2) (-2) (+1) (-2) (+2) (+4) (-2)

34 'p7' | +0.22 -0.50 +0.00 +0.00 +0.78 +0.42 +1.00

35 | (+2) (-2) (+1) (-1) (+3) (+2) (+4)

We may notice that all outranking situations, qualified at stability level +1 or -1, are now put to an indeterminate status. In the example here, we actually drop three positive outrankings: between p3 and p7, between p7 and p3, and between p6 and p3, where the last situation is already put to doubt by a veto situation (see Listing 3.9 Lines 22-35). We drop as well three negative outrankings: between p1 and p7, between p4 and p2, and between p7 and p4 (see Listing 3.9 Lines 22-35).

Notice by the way that outranking (resp. outranked) situations, although qualified at level +2 or +3 (resp. -2 or -3) may nevertheless be put to doubt by considerable performance differences. We may observe such an outranking situation when comparing, for instance, alternatives p2 and p4 (see Listing 3.9 Lines 24-25).

1>>> rg.showPairwiseComparison('p2','p4')

2 *------------ pairwise comparison ----*

3 Comparing actions : (p2, p4)

4 crit. wght. g(x) g(y) diff | ind pref r() | v veto

5 -------------------------------------------------------------------------

6 ec1 8.00 89.77 85.19 +4.58 | 5.00 10.00 +8.00 |

7 ec4 8.00 86.00 72.26 +13.74 | 5.00 10.00 +8.00 |

8 ec8 8.00 89.43 44.62 +44.81 | 5.00 10.00 +8.00 |

9 en3 6.00 20.79 80.81 -60.02 | 5.00 10.00 -6.00 | 60.00 -1.00

10 en5 6.00 23.83 49.69 -25.86 | 5.00 10.00 -6.00 |

11 en6 6.00 18.66 66.21 -47.55 | 5.00 10.00 -6.00 |

12 en9 6.00 26.65 50.92 -24.27 | 5.00 10.00 -6.00 |

13 so2 12.00 89.12 49.05 +40.07 | 5.00 10.00 +12.00 |

14 so7 12.00 84.73 55.57 +29.16 | 5.00 10.00 +12.00 |

15 Valuation in range: -72.00 to +72.00; global concordance: +24.00

Despite being robust, the apparent positive outranking situation between alternatives p2 and p4 is indeed put to doubt by a considerable counter-performance (-60.02) of p2 on criterion en3, a negative difference which exceeds slightly the assumed veto discrimination threshold v = 60.00 (see Listing 3.10 Line 9).
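The r() column and the veto flag of such a pairwise comparison can be reproduced with a small sketch. The threshold conventions used here are assumptions inferred from the figures in the listings of this section: a marginal value of +w when the performance difference is at least -ind, -w when it is at most -pref, 0 in between, and a veto when the difference is at most -v. The function names are hypothetical and this is not the Digraph3 implementation.

```python
def marginal_concordance(weight, diff, ind=5.0, pref=10.0):
    """Signed marginal value of 'x is at least as good as y' on one criterion."""
    if diff >= -ind:      # x is not noticeably worse than y
        return weight
    if diff <= -pref:     # x is clearly worse than y
        return -weight
    return 0.0            # in between: indeterminate

def is_vetoed(diff, veto=None):
    """True when a veto threshold exists and the counter-performance reaches it."""
    return veto is not None and diff <= -veto

# (criterion, weight, performance difference, veto threshold) for (p2, p4)
rows = [
    ("ec1", 8.0, 4.58, None), ("ec4", 8.0, 13.74, None), ("ec8", 8.0, 44.81, None),
    ("en3", 6.0, -60.02, 60.0), ("en5", 6.0, -25.86, None),
    ("en6", 6.0, -47.55, None), ("en9", 6.0, -24.27, None),
    ("so2", 12.0, 40.07, None), ("so7", 12.0, 29.16, None),
]

concordance = sum(marginal_concordance(w, d) for _, w, d, _ in rows)
vetoes = [c for c, _, d, v in rows if is_vetoed(d, v)]
print(concordance, vetoes)  # 24.0 ['en3']
```

The global concordance of +24 matches the listing, and the single veto on criterion en3 is what puts the otherwise robust outranking situation to doubt.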

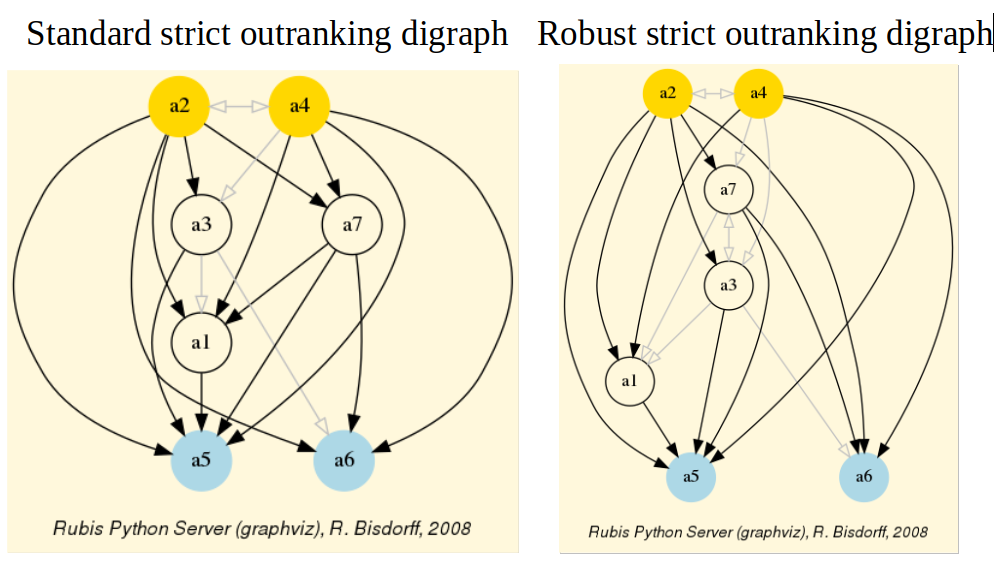

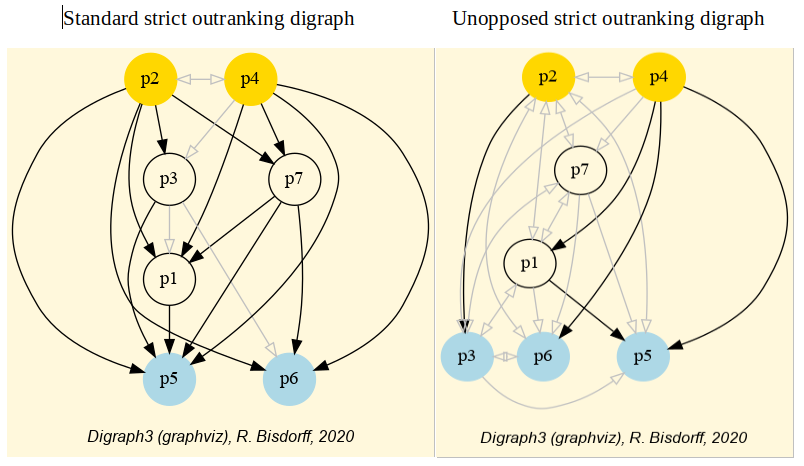

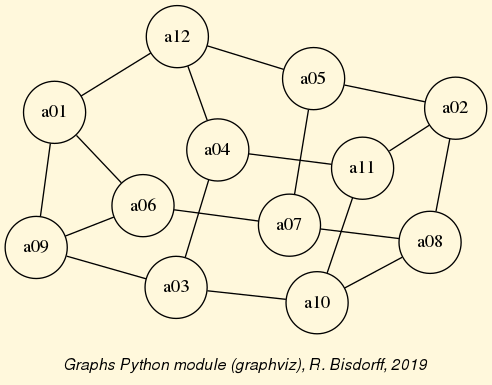

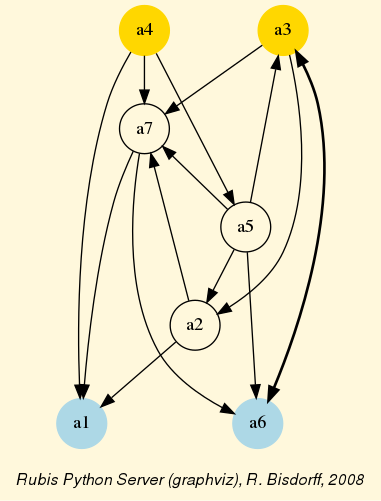

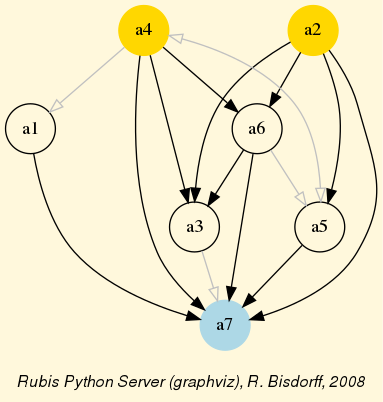

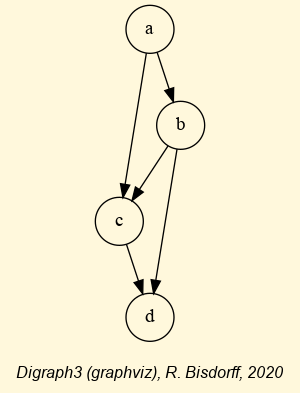

We may finally compare in Fig. 3.5 the standard and the robust version of the corresponding strict outranking digraphs, both oriented by their respective identical initial and terminal prekernels.

Fig. 3.5 Standard versus robust strict outranking digraphs oriented by their initial and terminal prekernels

The robust version drops two strict outranking situations: between p4 and p7 and between p7 and p1. The remaining 14 strict outranking (resp. outranked) situations are now all verified at a stability level of +2 and more (resp. -2 and less). They are, hence, only depending on potential significance weights that must respect the given significance preorder (see Listing 3.2).

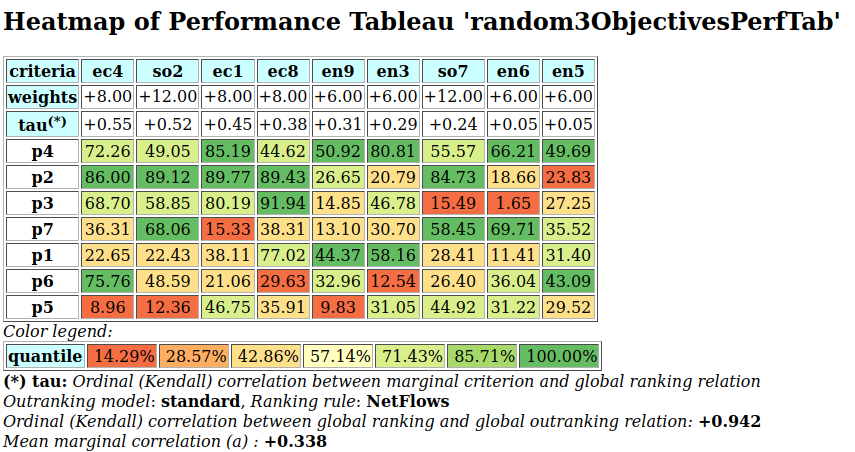

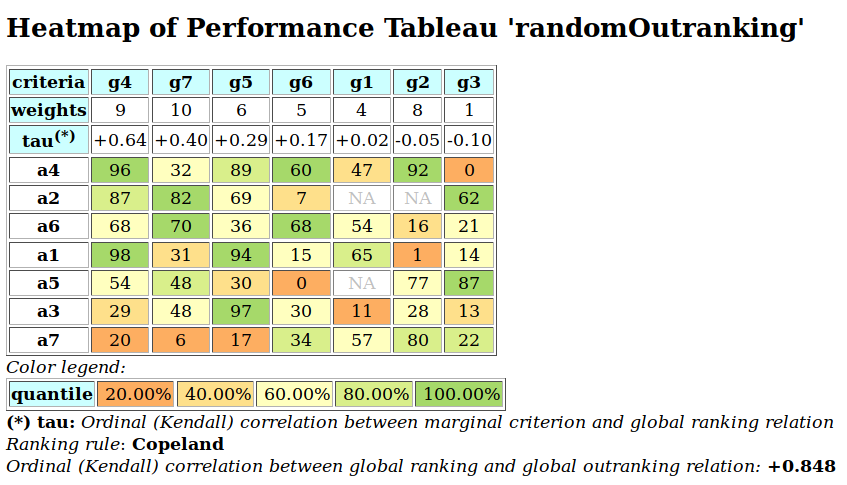

To appreciate the apparent orientation of the standard and robust strict outranking digraphs shown in Fig. 3.5, let us have a final heat map view on the underlying performance tableau ordered by the NetFlows ranking rule.

>>> t.showHTMLPerformanceHeatmap(Correlations=True,

... rankingRule='NetFlows')

Fig. 3.6 Heat map of the random 3 objectives performance tableau ordered by the NetFlows ranking rule

As the initial prekernel is here validated at stability level +2, recommending alternatives p4, as well as p2, as potential first choices, appears well justified. Alternative p4 represents indeed an overall best compromise choice between all decision objectives, whereas alternative p2 gives a unanimous best choice with respect to two out of three decision objectives. It is up to the decision maker to make the final choice.

To conclude, let us mention that it is precisely again our bipolar-valued logical characteristic framework that provides us here with a first order distributional dominance test for effectively qualifying the stability level 2 robustness of an outranking digraph when facing performance tableaux with criteria of only ordinal-valued significance weights. A real world application of our stability analysis with such a kind of performance tableau may be consulted in [BIS-2015p].


3.1.3. On unopposed outrankings with multiple decision objectives

When facing a performance tableau involving multiple decision objectives, the robustness level +/-3, introduced in the previous Section, may lead to distinguishing what we call unopposed outranking situations, like the one shown between alternatives p4 and p1 ($r(p_4 \succsim p_1) = +0.78$, see Listing 3.4 Line 11), namely preferential situations that are more or less validated or invalidated by all the decision objectives.

3.1.3.1. Characterising unopposed multiobjective outranking situations

Formally, we say that decision alternative x outranks decision alternative y unopposed when

x positively outranks y on one or more decision objective without x being positively outranked by y on any decision objective.

Dually, we say that decision alternative x does not outrank decision alternative y unopposed when

x is positively outranked by y on one or more decision objective without x outranking y on any decision objective.

Let us reconsider, for instance, the previous performance tableau with three decision objectives (see Listing 3.1):

1>>> from randomPerfTabs import\

2... Random3ObjectivesPerformanceTableau

3

4>>> t = Random3ObjectivesPerformanceTableau(

5... numberOfActions=7,

6... numberOfCriteria=9,seed=102)

7

8>>> t.showObjectives()

9 *------ show objectives -------"

10 Eco: Economical aspect

11 ec1 criterion of objective Eco 8

12 ec4 criterion of objective Eco 8

13 ec8 criterion of objective Eco 8

14 Total weight: 24.00 (3 criteria)

15 Soc: Societal aspect

16 so2 criterion of objective Soc 12

17 so7 criterion of objective Soc 12

18 Total weight: 24.00 (2 criteria)

19 Env: Environmental aspect

20 en3 criterion of objective Env 6

21 en5 criterion of objective Env 6

22 en6 criterion of objective Env 6

23 en9 criterion of objective Env 6

24 Total weight: 24.00 (4 criteria)

We notice in this example three decision objectives of equal importance (see Listing 3.11 Lines 10,15,19). What will be the outranking situations that are positively (resp. negatively) validated for each one of the decision objectives taken individually ?

We may obtain such unopposed multiobjective outranking situations by operating an epistemic o-average fusion (see the digraphsTools.symmetricAverage method) of the marginal outranking digraphs restricted to the coalition of criteria supporting each one of the decision objectives (see Listing 3.12 below).

1>>> from outrankingDigraphs import BipolarOutrankingDigraph

2>>> geco = BipolarOutrankingDigraph(t,objectivesSubset=['Eco'])

3>>> gsoc = BipolarOutrankingDigraph(t,objectivesSubset=['Soc'])

4>>> genv = BipolarOutrankingDigraph(t,objectivesSubset=['Env'])

5>>> from digraphs import FusionLDigraph

6>>> objectiveWeights = \

7... [t.objectives[obj]['weight'] for obj in t.objectives]

8

9>>> uopg = FusionLDigraph([geco,gsoc,genv],

10... operator='o-average',

11... weights=objectiveWeights)

12

13>>> uopg.showRelationTable(ReflexiveTerms=False)

14* ---- Relation Table -----

15 r | 'p1' 'p2' 'p3' 'p4' 'p5' 'p6' 'p7'

16-----|------------------------------------------------------------

17'p1' | - +0.00 +0.00 -0.69 +0.39 +0.11 +0.00

18'p2' | +0.00 - +0.83 +0.00 +0.00 +0.00 +0.00

19'p3' | +0.00 -0.33 - +0.00 +0.50 +0.00 +0.00

20'p4' | +0.78 +0.00 +0.61 - +1.00 +1.00 +0.67

21'p5' | -0.11 +0.00 +0.00 -0.89 - +0.11 +0.00

22'p6' | +0.00 +0.00 +0.00 -0.44 +0.17 - +0.00

23'p7' | +0.00 +0.00 +0.00 +0.00 +0.78 +0.42 -

24Valuation domain: [-1.000; 1.000]

Positive (resp. negative) $r(x \succsim y)$ characteristic values, like $r(p_1 \succsim p_5) = +0.39$ (see Listing 3.12 Line 17), show hence only outranking situations being validated (resp. invalidated) by one or more decision objectives without being invalidated (resp. validated) by any other decision objective.

For easily computing this kind of unopposed multiobjective outranking digraphs, the outrankingDigraphs module conveniently provides a corresponding UnOpposedBipolarOutrankingDigraph constructor.

1>>> from outrankingDigraphs import\

2... UnOpposedBipolarOutrankingDigraph

3

4>>> uopg = UnOpposedBipolarOutrankingDigraph(t)

5>>> uopg

6 *------- Object instance description ------*

7 Instance class : UnOpposedBipolarOutrankingDigraph

8 Instance name : unopposed_outrankings

9 # Actions : 7

10 # Criteria : 9

11 Size : 13

12 Oppositeness (%) : 43.48

13 Determinateness (%) : 61.71

14 Valuation domain : [-1.00;1.00]

15 Attributes : ['name', 'actions', 'valuationdomain', 'objectives',

16 'criteria', 'methodData', 'evaluation', 'order',

17 'runTimes', 'relation', 'marginalRelationsRelations',

18 'gamma', 'notGamma']

19>>> uopg.computeOppositeness(InPercents=True)

20 {'standardSize': 23, 'unopposedSize': 13,

21 'oppositeness': 43.47826086956522}

The resulting unopposed outranking digraph keeps in fact 13 (see Listing 3.13 Lines 11-12) out of the 23 positively validated standard outranking situations, leading to a degree of oppositeness, i.e. of preferential disagreement between decision objectives, of $(1.0 - 13/23) \approx 0.4348$.

We may now, for instance, verify the unopposed status of the outranking situation observed between alternatives p1 and p5.

1>>> uopg.showPairwiseComparison('p1','p5')

2 *------------ pairwise comparison ----*

3 Comparing actions : (p1, p5)

4 crit. wght. g(x) g(y) diff | ind pref r() |

5 ec1 8.00 38.11 46.75 -8.64 | 5.00 10.00 +0.00 |

6 ec4 8.00 22.65 8.96 +13.69 | 5.00 10.00 +8.00 |

7 ec8 8.00 77.02 35.91 +41.11 | 5.00 10.00 +8.00 |

8 en3 6.00 58.16 31.05 +27.11 | 5.00 10.00 +6.00 |

9 en5 6.00 31.40 29.52 +1.88 | 5.00 10.00 +6.00 |

10 en6 6.00 11.41 31.22 -19.81 | 5.00 10.00 -6.00 |

11 en9 6.00 44.37 9.83 +34.54 | 5.00 10.00 +6.00 |

12 so2 12.00 22.43 12.36 +10.07 | 5.00 10.00 +12.00 |

13 so7 12.00 28.41 44.92 -16.51 | 5.00 10.00 -12.00 |

14 Valuation in range: -72.00 to +72.00; global concordance: +28.00

In Listing 3.14 we see that alternative p1 does indeed positively outrank alternative p5 from the economic perspective ($r(p_1 \succsim_{Eco} p_5) = +16/24$) as well as from the environmental perspective ($r(p_1 \succsim_{Env} p_5) = +12/24$). From the societal perspective, in contrast, both alternatives appear incomparable ($r(p_1 \succsim_{Soc} p_5) = 0/24$).

When fixed proportional criteria significance weights per objective are given, these outranking situations appear hence stable with respect to all possible importance weights we could allocate to the decision objectives.
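The o-average fusion behind these unopposed values can be sketched as follows. This is an illustrative reading of the operator and not the library's code: the function name `o_average` is hypothetical, and the sketch assumes that the weighted average runs over all decision objectives, including the indeterminate ones, an assumption that reproduces the +0.39 value of Listing 3.12.

```python
def o_average(values, weights=None, med=0.0):
    """Weighted average when no two values lie on opposite sides of med, else med."""
    if weights is None:
        weights = [1.0] * len(values)
    has_positive = any(v > med for v in values)
    has_negative = any(v < med for v in values)
    if has_positive and has_negative:
        return med  # objectives contradict each other: indeterminate
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)

# Marginal r() sums per objective for (p1, p5), read from Listing 3.14
eco = (0 + 8 + 8) / 24      # ec1, ec4, ec8
soc = (12 - 12) / 24        # so2, so7
env = (6 + 6 - 6 + 6) / 24  # en3, en5, en6, en9
print(round(o_average([eco, soc, env], weights=[24, 24, 24]), 2))  # 0.39

# (p2, p1) from Listing 3.7: Eco and Soc are unanimous, Env is negative
print(o_average([24 / 24, 24 / 24, -6 / 24], weights=[24, 24, 24]))  # 0.0
```

The second call shows an opposed situation: a single negatively oriented objective is enough to put the fused characteristic value to the indeterminate value 0, as observed for the pair (p2, p1) in Listing 3.12.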

This gives way for computing multiobjective choice recommendations.

3.1.3.2. Computing unopposed multiobjective choice recommendations

Indeed, best choice recommendations, computed from an unopposed multiobjective outranking digraph, will in fact deliver efficient choice recommendations.

1>>> uopg.showBestChoiceRecommendation()

2 Best choice recommendation(s) (BCR)

3 (in decreasing order of determinateness)

4 Credibility domain: [-1.00,1.00]

5 === >> potential first choice(s)

6 choice : ['p2', 'p4', 'p7']

7 independence : 0.00

8 dominance : 0.33

9 absorbency : 0.00

10 covering (%) : 33.33

11 determinateness (%) : 50.00

12 === >> potential last choice(s)

13 choice : ['p3', 'p5', 'p6', 'p7']

14 independence : 0.00

15 dominance : -0.61

16 absorbency : 0.11

17 covered (%) : 33.33

18 determinateness (%) : 50.00

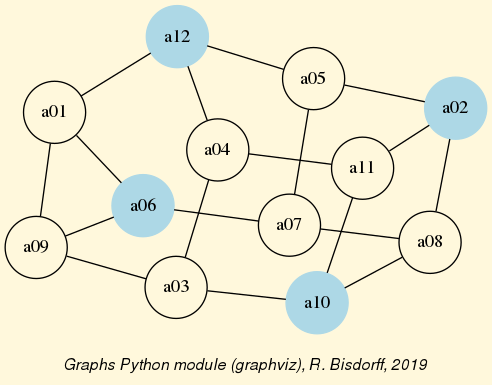

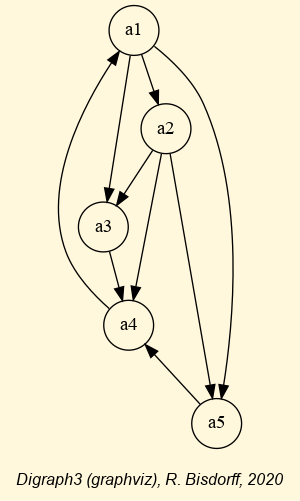

Our previous robust best choice recommendation (p2 and p4, see Fig. 3.5) remains, in this example here, stable. We recover indeed the best choice recommendation [‘p2’, ‘p4’, ‘p7’] (see Listing 3.15 Line 6). Yet, notice that decision alternative p7 appears to be at the same time a potential first as well as a potential last choice recommendation (see Line 13), a consequence of p7 being completely incomparable to the other decision alternatives when restricting the comparability to only unopposed strict outranking situations.

We may visualize this kind of efficient choice recommendation in Fig. 3.7 below.

1>>> (~(-uopg)).exportGraphViz(fileName = 'unopDigraph',

2... firstChoice = ['p2', 'p4'],

3... lastChoice = ['p3', 'p5', 'p6'])

4 *---- exporting a dot file for GraphViz tools ---------*

5 Exporting to unopDigraph.dot

6 dot -Grankdir=BT -Tpng unopDigraph.dot -o unopDigraph.png

Fig. 3.7 Standard versus unopposed strict outranking digraphs oriented by first and last choice recommendations

In order to reach a unique best choice, a decision maker will necessarily have to weigh, in a second stage of the decision aiding process, the relative importance of the individual decision objectives (see tutorial on computing a best choice recommendation).


3.3. Theoretical and computational advancements

3.3.1. Coping with missing data and indeterminateness

When stubbornly keeping to a two-valued logic, where every argument can only be true or false, there is no room for efficiently taking into account missing data or logical indeterminateness. These cases are seen as problematic and are, at best, simply ignored. Worse, in modern data science, missing data often get replaced with fictive values, thereby potentially falsifying all subsequent computations.

In social choice problems like elections, abstentions are, however, frequently observed and represent a social expression that may be significant for revealing non represented social preferences.

In marketing studies, interviewees will not always respond to all the submitted questions. Again, such abstentions do sometimes contain nevertheless valid information concerning consumer preferences.

3.3.1.1. A motivating data set

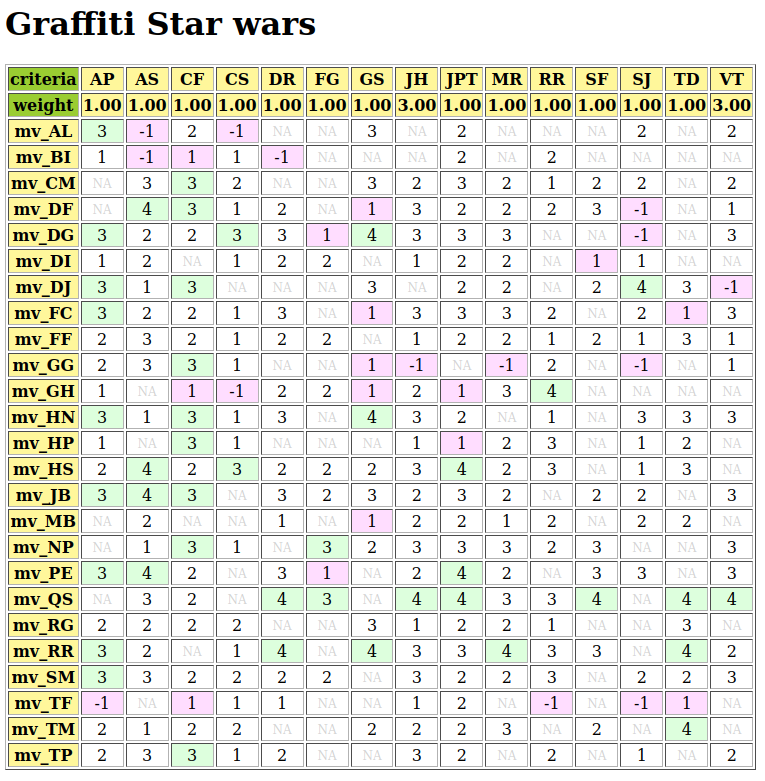

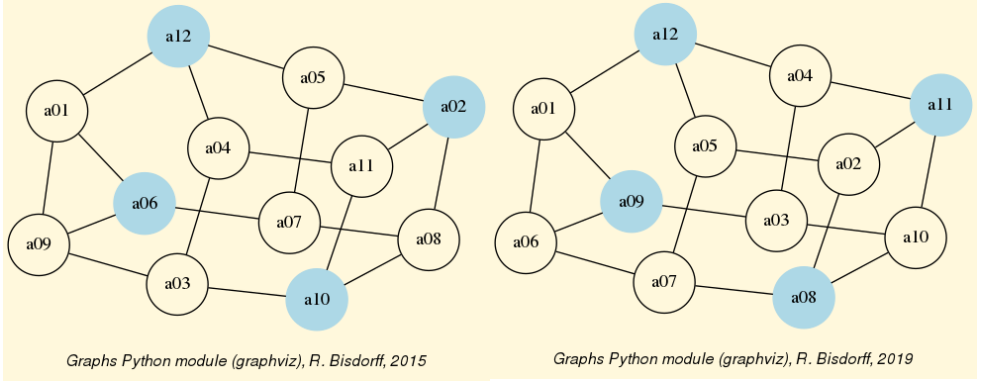

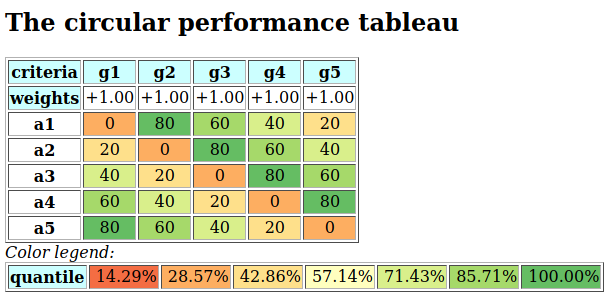

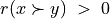

Let us consider such a performance tableau, stored in file graffiti07.py, which gathers a movie magazine's ratings of some movies that could actually be seen in town [1] (see Fig. 3.14).

1>>> from perfTabs import PerformanceTableau

2>>> t = PerformanceTableau('graffiti07')

3>>> t.showHTMLPerformanceTableau(title='Graffiti Star wars',

4... ndigits=0)

Fig. 3.14 Graffiti magazine’s movie ratings from September 2007

15 journalists and movie critics provide here their rating of 25 movies: 5 stars (masterpiece), 4 stars (must be seen), 3 stars (excellent), 2 stars (good), 1 star (could be seen), -1 star (I do not like), -2 (I hate), NA (not seen).

To aggregate all the critics’ rating opinions, the Graffiti magazine provides for each movie a global score computed as an average grade, just ignoring the not seen data. These averages are thus not computed on comparable denominators; some critics do indeed use a more or less extended range of grades. The movies not seen by critic SJ, for instance, are favored, as this critic is more severe than others in her grading. Dropping the movies that were not seen by all the critics is not possible here either, as none of the 25 movies was actually seen by all the critics. Providing any value for the missing data will likewise always somehow falsify any global value scoring. What can be done?

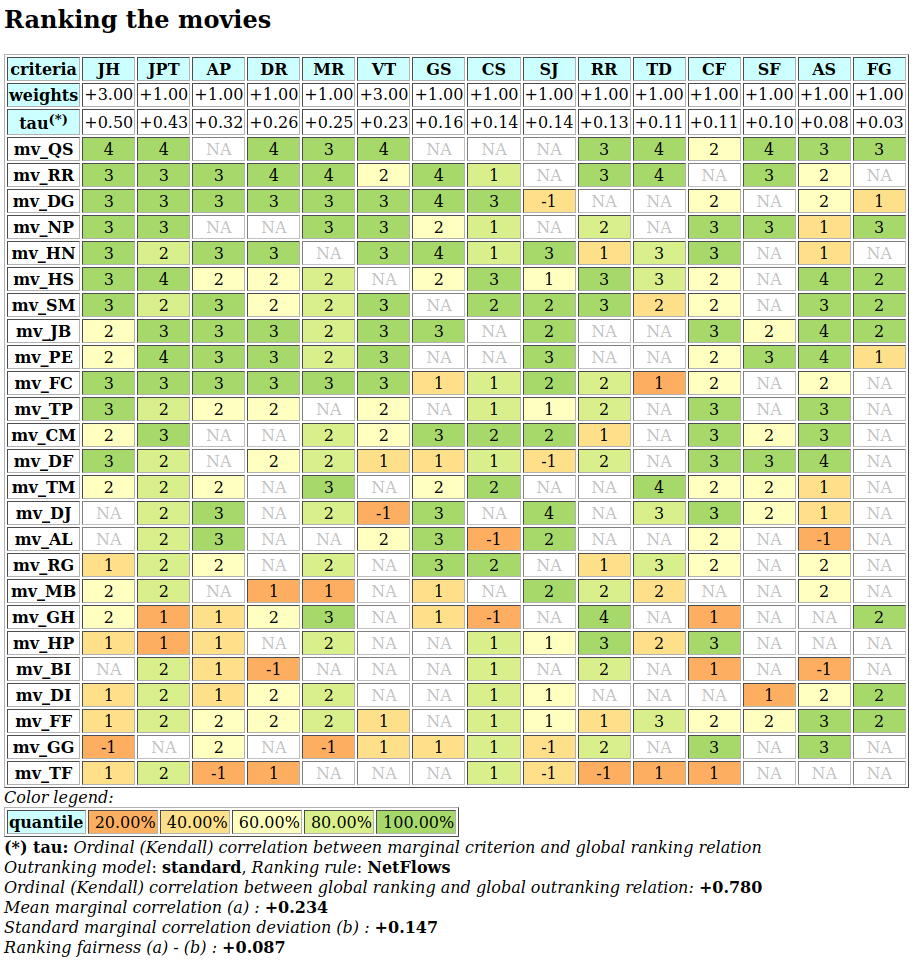

A better approach is to rank the movies on the basis of pairwise bipolar-valued at least as well rated as opinions. Under this epistemic argumentation approach, missing data are naturally treated as opinion abstentions and hence do not falsify the logical computations. Such a ranking (see the tutorial on Ranking with incommensurable performance criteria) of the 25 movies is provided, for instance, by the heat map view shown in Fig. 3.15.

>>> t.showHTMLPerformanceHeatmap(Correlations=True,

... rankingRule='NetFlows',

... ndigits=0)

Fig. 3.15 Graffiti magazine’s ordered movie ratings from September 2007

There is no doubt that movie mv_QS, with 6 ‘must be seen’ marks, is correctly best-ranked and the movie mv_TV is worst-ranked with five ‘don’t like’ marks.

3.3.1.2. Modelling pairwise bipolar-valued rating opinions

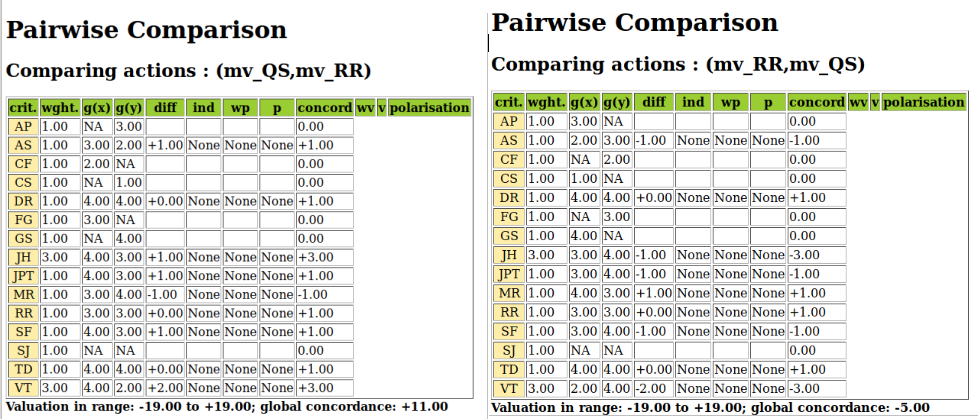

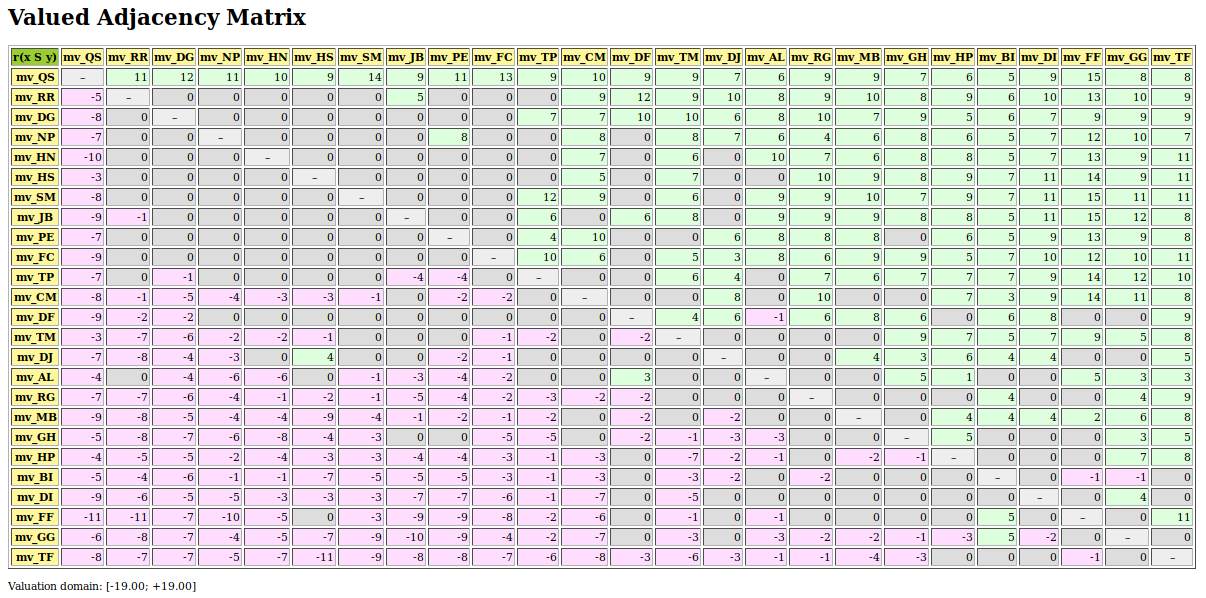

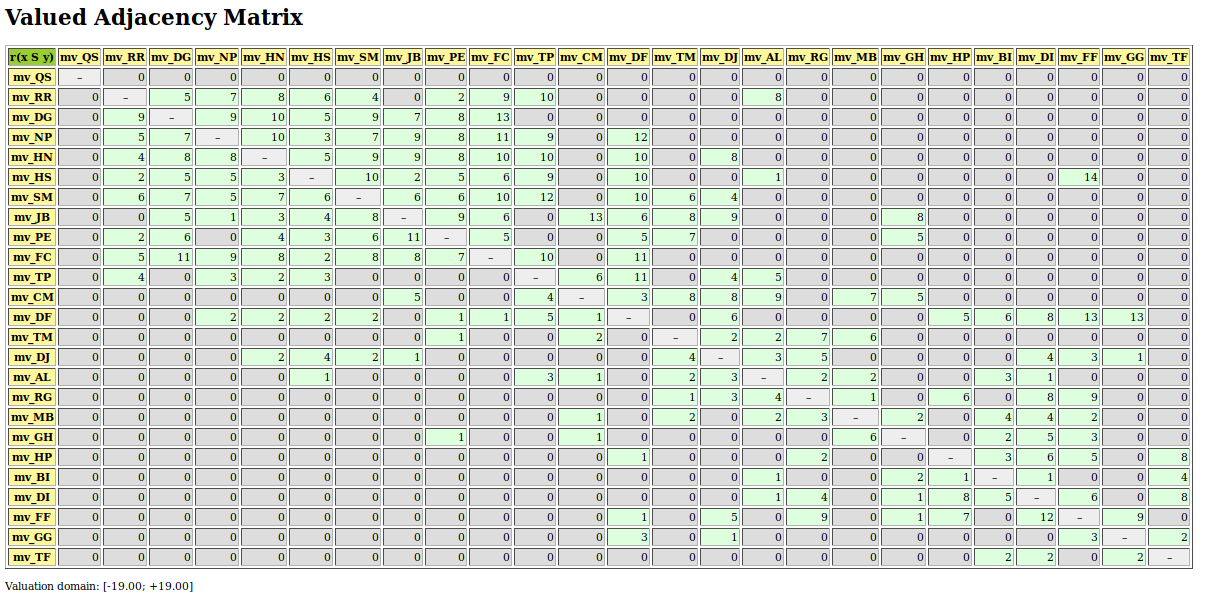

Let us explicitly construct the underlying bipolar-valued outranking digraph and consult in Fig. 3.16 the pairwise characteristic values we observe between the two best-ranked movies, namely mv_QS and mv_RR.

1>>> from outrankingDigraphs import BipolarOutrankingDigraph

2>>> g = BipolarOutrankingDigraph(t)

3>>> g.recodeValuation(-19,19) # integer characteristic values

4>>> g.showHTMLPairwiseOutrankings('mv_QS','mv_RR')

Fig. 3.16 Pairwise comparison of the two best-ranked movies

Six out of the fifteen critics have not seen one or the other of these two movies. Notice the higher significance (3) that is granted to two locally renowned movie critics, namely JH and VT. Their opinion counts for three times the opinion of the other critics. All nine critics that have seen both movies, except critic MR, state that mv_QS is rated at least as well as mv_RR and the balance of positive against negative opinions amounts to +11, a characteristic value which positively validates the outranking situation with a majority of (11/19 + 1.0) / 2.0 = 79%.
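How abstentions enter such a majority margin can be sketched with a few lines of plain Python. The ratings and critic names below are purely hypothetical (the actual Graffiti data are only shown in the figures), the function names are illustrative, and the sketch ignores any discrimination thresholds the outranking model may apply.

```python
def majority_margin(ratings_x, ratings_y, weights):
    """Balance of weighted 'x rated at least as well as y' opinions.

    A missing rating (None) on either side counts as an abstention (0).
    """
    margin = 0
    for critic, weight in weights.items():
        gx, gy = ratings_x.get(critic), ratings_y.get(critic)
        if gx is None or gy is None:
            continue  # abstention: neither supports nor opposes
        margin += weight if gx >= gy else -weight
    return margin

def majority_share(margin, total_weight):
    """Convert a bipolar margin into a majority share in [0, 1]."""
    return (margin / total_weight + 1.0) / 2.0

# Hypothetical ratings of two movies by four critics
weights = {"A": 3, "B": 1, "C": 1, "D": 1}
movie_x = {"A": 4, "B": 3, "C": None, "D": 1}
movie_y = {"A": 3, "B": 3, "C": 2, "D": 2}

m = majority_margin(movie_x, movie_y, weights)
print(m, majority_share(m, sum(weights.values())))  # 3 0.75

# The margin of +11 out of 19 quoted in the text
print(round(majority_share(11, 19), 2))  # 0.79
```

Critic C, who has not seen the first movie, neither adds to nor subtracts from the margin, so no fictive grade has to be invented.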

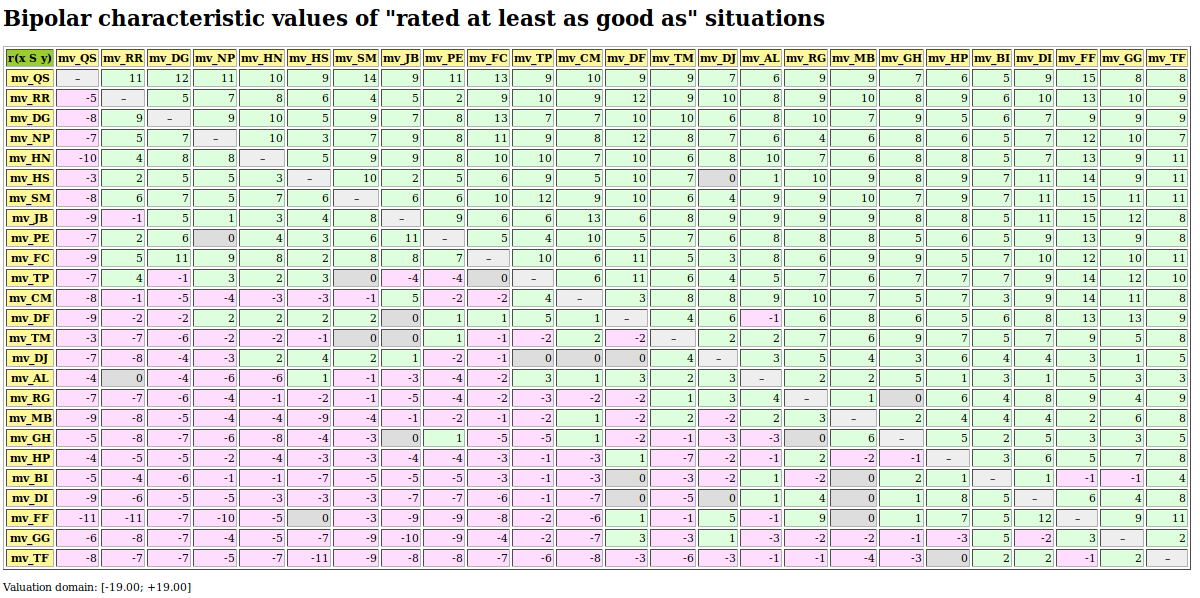

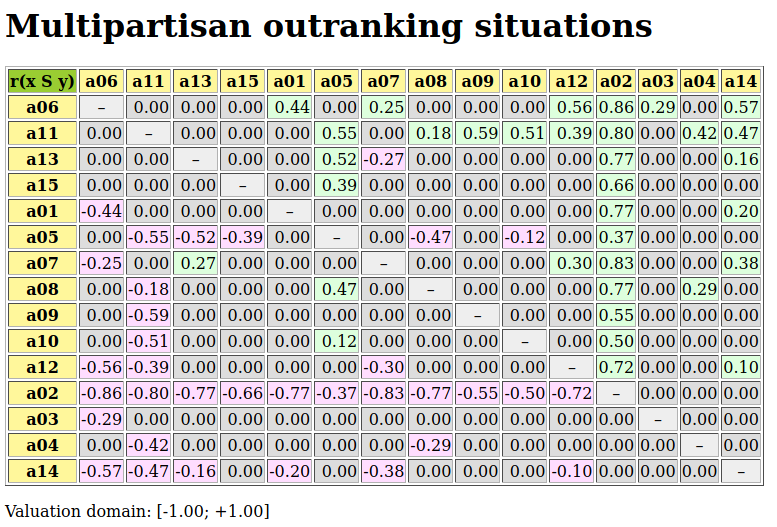

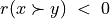

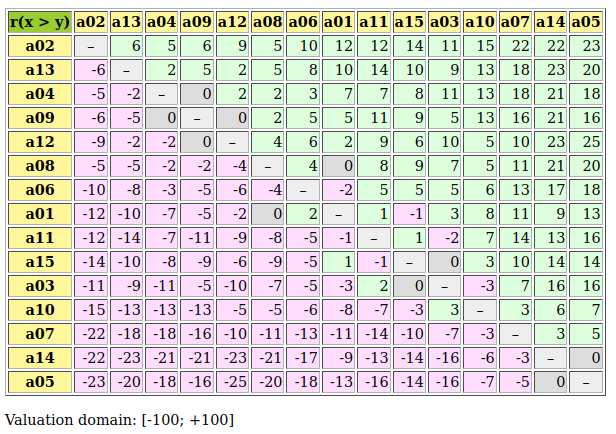

The complete table of pairwise majority margins of global ‘at least as well rated as’ opinions, ranked by the same rule as shown in the heat map above (see Fig. 3.15), may be shown in Fig. 3.17.

1>>> ranking = g.computeNetFlowsRanking()

2>>> g.showHTMLRelationTable(actionsList=ranking, ndigits=0,

3... tableTitle='Bipolar characteristic values of\

4... "rated at least as good as" situations')

Fig. 3.17 Pairwise majority margins of ‘at least as well rated as’ rating opinions

Positive characteristic values, validating a global ‘at least as well rated as’ opinion are marked in light green (see Fig. 3.17). Whereas negative characteristic values, invalidating such a global opinion, are marked in light red. We may by the way notice that the best-ranked movie mv_QS is indeed a Condorcet winner, i.e. better rated than all the other movies by a 65% majority of critics. This majority may be assessed from the average determinateness of the given bipolar-valued outranking digraph g.

>>> print( '%.0f%%' % g.computeDeterminateness(InPercents=True) )

65%

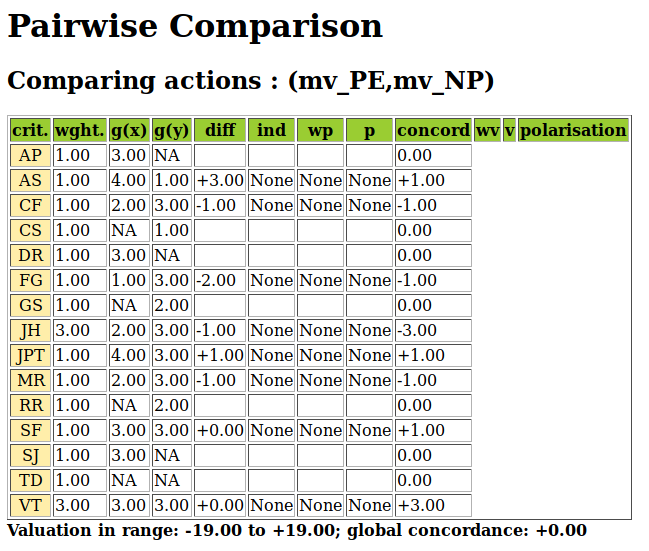

Notice also the indeterminate situation we observe, for instance, when comparing movie mv_PE with movie mv_NP.

>>> g.showHTMLPairwiseComparison('mv_PE','mv_NP')

Fig. 3.18 Indeterminate pairwise comparison example

Only eight, out of the fifteen critics, have seen both movies and the positive opinions do neatly balance the negative ones. A global statement that mv_PE is ‘at least as well rated as’ mv_NP may in this case hence neither be validated, nor invalidated; a preferential situation that cannot be modelled with any scoring approach.

It is fair, however, to eventually mention here that the Graffiti magazine’s average scoring method is actually showing a very similar ranking. Indeed, average scores usually confirm well all evident pairwise comparisons, yet enforce comparability for all less evident ones.

Notice finally the ordinal correlation tau values in Fig. 3.15 3rd row. How may we compute these ordinal correlation indexes ?


3.3.2. Ordinal correlation equals bipolar-valued relational equivalence

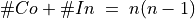

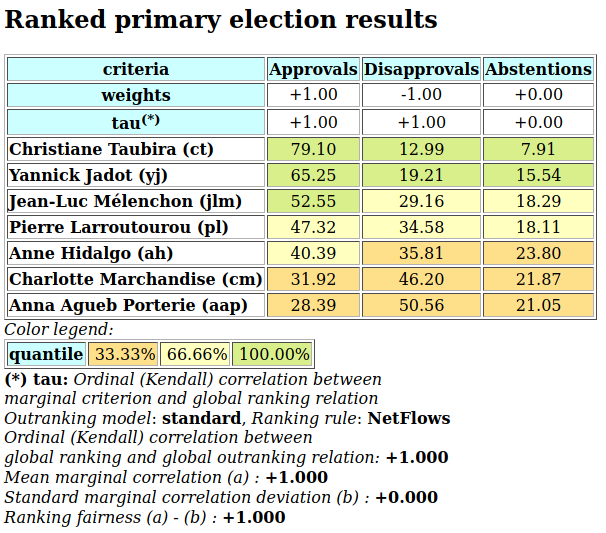

3.3.2.1. Kendall’s tau index

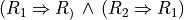

M. G. Kendall ([KEN-1938p]) defined his ordinal correlation $\tau$ (tau) index for linear orders of dimension n as a balancing of the number #Co of correctly oriented pairs against the number #In of incorrectly oriented pairs. The total number of irreflexive pairs being n(n-1), in the case of linear orders, $\#Co + \#In = n(n-1)$. Hence $\tau = \frac{\#Co}{n(n-1)} - \frac{\#In}{n(n-1)}$. In case #In is zero, $\tau = +1$ (all pairs are equivalently oriented); inversely, in case #Co is zero, $\tau = -1$ (all pairs are differently oriented).

Noticing that $\frac{\#Co}{n(n-1)} = 1 - \frac{\#In}{n(n-1)}$, and recalling that the bipolar-valued negation is operated by changing the sign of the characteristic value, Kendall’s original tau definition implemented in fact the bipolar-valued negation of the non equivalence of two linear orders:

$$\tau = 1 - 2\,\frac{\#In}{n(n-1)} = -\Big(2\,\frac{\#In}{n(n-1)} - 1\Big) = 2\,\frac{\#Co}{n(n-1)} - 1,$$

i.e. the normalized majority margin of equivalently oriented irreflexive pairs.
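The counting argument can be checked with a short plain-Python sketch. The function name `kendall_tau` is chosen for this illustration, and the two linear orders are assumed to be given as lists ranking the same items from first to last; all n(n-1) irreflexive ordered pairs are counted, as in the text.

```python
from itertools import permutations

def kendall_tau(order1, order2):
    """Kendall's tau of two linear orders over the same items."""
    pos1 = {x: i for i, x in enumerate(order1)}
    pos2 = {x: i for i, x in enumerate(order2)}
    n = len(order1)
    co = inc = 0
    for x, y in permutations(order1, 2):  # the n(n-1) irreflexive ordered pairs
        if (pos1[x] < pos1[y]) == (pos2[x] < pos2[y]):
            co += 1   # pair oriented the same way in both orders
        else:
            inc += 1  # pair oriented differently
    total = n * (n - 1)
    return co / total - inc / total

print(kendall_tau(["a", "b", "c"], ["a", "b", "c"]))  # 1.0
print(kendall_tau(["a", "b", "c"], ["c", "b", "a"]))  # -1.0
# One adjacent swap: #Co = 10, #In = 2, hence tau = 2*10/12 - 1
print(round(kendall_tau(["a", "b", "c", "d"], ["b", "a", "c", "d"]), 3))  # 0.667
```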

Let R1 and R2 be two random crisp relations defined on a same set of 5 alternatives. We may compute Kendall’s tau index as follows.

1>>> from randomDigraphs import RandomDigraph

2>>> R1 = RandomDigraph(order=5,Bipolar=True)

3>>> R2 = RandomDigraph(order=5,Bipolar=True)

4>>> from digraphs import EquivalenceDigraph

5>>> E = EquivalenceDigraph(R1,R2)

6>>> E.showRelationTable(ReflexiveTerms=False)

7 * ---- Relation Table -----

8 r(<=>)| 'a1' 'a2' 'a3' 'a4' 'a5'

9 ------|-------------------------------------------

10 'a1' | - -1.00 1.00 -1.00 1.00

11 'a2' | -1.00 - -1.00 1.00 -1.00

12 'a3' | -1.00 -1.00 - 1.00 1.00

13 'a4' | -1.00 1.00 -1.00 - 1.00

14 'a5' | -1.00 1.00 -1.00 1.00 -

15 Valuation domain: [-1.00;1.00]

16>>> E.correlation

17 {'correlation': -0.1, 'determination': 1.0}

In the table of the equivalence relation $(R_1 \Leftrightarrow R_2)$ above (see Listing 3.34 Lines 10-14), we observe that the normalized majority margin of equivalent versus non equivalent irreflexive pairs amounts to (9 - 11)/20 = -0.1, i.e. the value of Kendall’s tau index in this plainly determined crisp case (see Listing 3.34 Line 17).

What happens now with more or less determined and even partially indeterminate relations ? May we proceed in a similar way ?

3.3.2.2. Bipolar-valued relational equivalence

Let us now consider two randomly bipolar-valued digraphs R1 and R2 of order five, generated with the RandomValuationDigraph class imported from the randomDigraphs module.

1>>> R1 = RandomValuationDigraph(order=5,seed=1)

2>>> R1.showRelationTable(ReflexiveTerms=False)

3 * ---- Relation Table -----

4 r(R1)| 'a1' 'a2' 'a3' 'a4' 'a5'

5 ------|-------------------------------------------

6 'a1' | - -0.66 0.44 0.94 -0.84

7 'a2' | -0.36 - -0.70 0.26 0.94

8 'a3' | 0.14 0.20 - 0.66 -0.04

9 'a4' | -0.48 -0.76 0.24 - -0.94

10 'a5' | -0.02 0.10 0.54 0.94 -

11 Valuation domain: [-1.00;1.00]

12>>> R2 = RandomValuationDigraph(order=5,seed=2)

13>>> R2.showRelationTable(ReflexiveTerms=False)

14 * ---- Relation Table -----

15 r(R2)| 'a1' 'a2' 'a3' 'a4' 'a5'

16 ------|-------------------------------------------

17 'a1' | - -0.86 -0.78 -0.80 -0.08

18 'a2' | -0.58 - 0.88 0.70 -0.22

19 'a3' | -0.36 0.54 - -0.46 0.54

20 'a4' | -0.92 0.48 0.74 - -0.60

21 'a5' | 0.10 0.62 0.00 0.84 -

22 Valuation domain: [-1.00;1.00]

We may notice in the relation tables shown above that 10 pairs, like (a1,a2) or (a3,a2) for instance, appear equivalently oriented (see Listing 3.35 Lines 6,17 or 8,19). The EquivalenceDigraph class implements this relational equivalence relation between digraphs R1 and R2 (see Listing 3.36).

1>>> eq = EquivalenceDigraph(R1,R2)

2>>> eq.showRelationTable(ReflexiveTerms=False)

3 * ---- Relation Table -----

4 r(<=>)| 'a1' 'a2' 'a3' 'a4' 'a5'

5 ------|-------------------------------------------

6 'a1' | - 0.66 -0.44 -0.80 0.08

7 'a2' | 0.36 - -0.70 0.26 -0.22

8 'a3' | -0.14 0.20 - -0.46 -0.04

9 'a4' | 0.48 -0.48 0.24 - 0.60

10 'a5' | -0.02 0.10 0.00 0.84 -

11 Valuation domain: [-1.00;1.00]

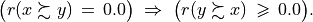

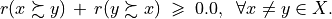

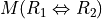

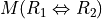

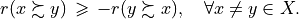

In our bipolar-valued epistemic logic, logical disjunctions and conjunctions are implemented as max, respectively min operators. Notice also that the logical equivalence $(R_1 \Leftrightarrow R_2)$ corresponds to a double implication $(R_1 \Rightarrow R_2) \wedge (R_2 \Rightarrow R_1)$ and that the implication $(R_1 \Rightarrow R_2)$ is logically equivalent to the disjunction $(\neg R_1 \vee R_2)$.
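The min/max reading of these connectives can be tried out in plain Python. The following is a minimal sketch only: the helper names `neg`, `implies` and `equiv` are hypothetical and not part of Digraph3, and negation is assumed to be the sign change of the characteristic value.

```python
# Bipolar-valued connectives on characteristic values in [-1.0, 1.0].
# Helper names are illustrative assumptions, not Digraph3 functions.

def neg(r):
    """Negation: change the sign of the characteristic value."""
    return -r

def implies(r1, r2):
    """r1 => r2, read as the disjunction (not r1) or r2, i.e. a max."""
    return max(neg(r1), r2)

def equiv(r1, r2):
    """r1 <=> r2, read as a double implication, i.e. a min of two max."""
    return min(implies(r1, r2), implies(r2, r1))

# Characteristic values copied from the relation tables of R1 and R2.
print(equiv(-0.66, -0.86))  # pair (a1,a2): same orientation
print(equiv(0.44, -0.78))   # pair (a1,a3): opposite orientation
print(equiv(0.54, 0.00))    # pair (a5,a3): R2 is indeterminate
```

The three results, 0.66, -0.44 and 0.0, agree with the corresponding cells of the eq relation table in Listing 3.36.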

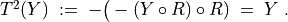

When $r(x\,R_1\,y)$ and $r(x\,R_2\,y)$ denote the bipolar-valued characteristic values of relation R1, resp. R2, we may hence compute as follows a majority margin $M(R_1 \Leftrightarrow R_2)$ between equivalently and not equivalently oriented irreflexive pairs (x,y).

$$M(R_1 \Leftrightarrow R_2) = \sum_{x \neq y} \min\Big[\max\big(-r(x\,R_1\,y),\, r(x\,R_2\,y)\big),\; \max\big(-r(x\,R_2\,y),\, r(x\,R_1\,y)\big)\Big]$$

$M(R_1 \Leftrightarrow R_2)$ is thus given by the sum of the non reflexive terms of the relation table of eq, the relation equivalence digraph computed above (see Listing 3.36).

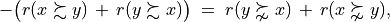

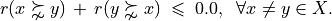

In the crisp case, $M(R_1 \Leftrightarrow R_2)$ is now normalized with the maximum number of possible irreflexive pairs, namely n(n-1). In a generalized r-valued case, the maximal possible equivalence majority margin M corresponds to the sum D of the conjoint determinations of $r(x\,R_1\,y)$ and $r(x\,R_2\,y)$ (see [BIS-2012p]).

$$D = \sum_{x \neq y} \min\big[\,|r(x\,R_1\,y)|,\; |r(x\,R_2\,y)|\,\big]$$

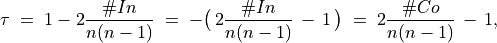

Thus, we obtain in the general r-valued case:

$$\tau(R_1,R_2) = \frac{M(R_1 \Leftrightarrow R_2)}{D}$$

$\tau(R_1,R_2)$ corresponds thus to a classical ordinal correlation index, but restricted to the conjointly determined parts of the given relations R1 and R2. In the limit case of two crisp linear orders, D equals n(n-1), i.e. the number of irreflexive pairs, and we hence recover Kendall's original tau index definition.
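The limit case of two crisp linear orders can be checked numerically. The sketch below is an illustration under stated assumptions: a linear order is encoded as +1 when x is ranked before y and -1 otherwise, and the function names are hypothetical, not Digraph3 API. It compares the ratio M/D with the classical count of concordant minus discordant pairs.

```python
from itertools import combinations, permutations

def crisp_relation(ranking):
    """Crisp linear order: +1 if x is ranked before y, -1 otherwise."""
    pos = {x: i for i, x in enumerate(ranking)}
    return {(x, y): (1 if pos[x] < pos[y] else -1)
            for x, y in permutations(ranking, 2)}

def extended_tau(rel1, rel2):
    """Ratio M / D computed over all irreflexive pairs."""
    M = sum(min(max(-rel1[p], rel2[p]), max(-rel2[p], rel1[p])) for p in rel1)
    D = sum(min(abs(rel1[p]), abs(rel2[p])) for p in rel1)
    return M / D  # D = n(n-1) > 0 for crisp linear orders

def kendall_tau(ranking1, ranking2):
    """Classical Kendall tau: (concordant - discordant) / (n(n-1)/2)."""
    p1 = {x: i for i, x in enumerate(ranking1)}
    p2 = {x: i for i, x in enumerate(ranking2)}
    score = 0
    for x, y in combinations(ranking1, 2):
        same = (p1[x] < p1[y]) == (p2[x] < p2[y])
        score += 1 if same else -1
    n = len(ranking1)
    return score / (n * (n - 1) / 2)

rk1 = ['a', 'b', 'c', 'd']
rk2 = ['b', 'a', 'c', 'd']  # one inverted pair
print(extended_tau(crisp_relation(rk1), crisp_relation(rk2)))
print(kendall_tau(rk1, rk2))
```

Both calls give the same value here, since each unordered pair is simply counted twice in M and in D.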

It is worthwhile noticing that the ordinal correlation index $\tau(R_1,R_2)$ we obtain above corresponds to the ratio of

- $r(R_1 \Leftrightarrow R_2) = M(R_1 \Leftrightarrow R_2)\,/\,n(n-1)$: the normalized majority margin of the pairwise relational equivalence statements, also called valued ordinal correlation, and

- $d = D\,/\,n(n-1)$: the normalized determination of the corresponding pairwise relational equivalence statements, in fact the determinateness of the relational equivalence digraph.
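The quantities M, D, r(R1<=>R2), d and tau can be recomputed by hand from the two relation tables of R1 and R2. The sketch below copies the printed table values as decimal strings and uses plain dictionaries instead of Digraph3 objects. The helper `to_relation` is a hypothetical name introduced for this illustration.

```python
from decimal import Decimal

actions = ['a1', 'a2', 'a3', 'a4', 'a5']
# Rows of the two relation tables; None marks the reflexive cell.
R1_rows = [
    [None, '-0.66', '0.44', '0.94', '-0.84'],
    ['-0.36', None, '-0.70', '0.26', '0.94'],
    ['0.14', '0.20', None, '0.66', '-0.04'],
    ['-0.48', '-0.76', '0.24', None, '-0.94'],
    ['-0.02', '0.10', '0.54', '0.94', None],
]
R2_rows = [
    [None, '-0.86', '-0.78', '-0.80', '-0.08'],
    ['-0.58', None, '0.88', '0.70', '-0.22'],
    ['-0.36', '0.54', None, '-0.46', '0.54'],
    ['-0.92', '0.48', '0.74', None, '-0.60'],
    ['0.10', '0.62', '0.00', '0.84', None],
]

def to_relation(rows):
    """Map each irreflexive pair (x, y) to its Decimal characteristic value."""
    return {(x, y): Decimal(v)
            for x, row in zip(actions, rows)
            for y, v in zip(actions, row) if v is not None}

r1, r2 = to_relation(R1_rows), to_relation(R2_rows)
pairs = list(r1)
# Majority margin M and conjoint determination D over the irreflexive pairs.
M = sum(min(max(-r1[p], r2[p]), max(-r2[p], r1[p])) for p in pairs)
D = sum(min(abs(r1[p]), abs(r2[p])) for p in pairs)
n2 = len(actions) * (len(actions) - 1)
print('r(R1<=>R2) = %+.3f, d = %.3f, tau = %+.3f' % (M / n2, D / n2, M / D))
```

With these table values, M amounts to 0.52 and D to 7.12, so that the printed figures are +0.026, 0.356 and +0.073, in agreement with Listing 3.37.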

We have thus successfully factored out the determination effect from the correlation effect. With completely determined relations, $\tau(R_1,R_2) = r(R_1 \Leftrightarrow R_2)$. By convention, we set the ordinal correlation with a completely indeterminate relation, i.e. when D = 0, to the indeterminate correlation value 0.0. With uniformly chosen random r-valued relations, the expected tau index is 0.0, denoting in fact an indeterminate correlation. The corresponding expected normalized determination d is about 0.333 (see [BIS-2012p]).
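Both the D = 0 convention and the expected values quoted above can be explored with a small simulation. This sketch assumes that "uniformly chosen" means independent characteristic values drawn uniformly from [-1.0, 1.0]. The function name, the seed and the sample size are arbitrary choices made for the illustration.

```python
import random

def tau_and_determination(values1, values2):
    """Return (tau, d) for two equally long lists of characteristic values."""
    M = sum(min(max(-a, b), max(-b, a)) for a, b in zip(values1, values2))
    D = sum(min(abs(a), abs(b)) for a, b in zip(values1, values2))
    tau = M / D if D > 0 else 0.0  # convention for a fully indeterminate case
    return tau, D / len(values1)

rng = random.Random(1)
k = 100_000  # number of simulated irreflexive pairs (arbitrary)
u = [rng.uniform(-1.0, 1.0) for _ in range(k)]
v = [rng.uniform(-1.0, 1.0) for _ in range(k)]
tau, d = tau_and_determination(u, v)
print('tau = %+.3f, d = %.3f' % (tau, d))

# A completely indeterminate first relation gives tau = 0.0 by convention.
print(tau_and_determination([0.0, 0.0], [0.5, -0.5]))
```

Under this assumption, the simulated d should come out close to 1/3 and the simulated tau close to 0.0, up to sampling noise.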

We may verify these relations with help of the corresponding equivalence digraph eq (see Listing 3.37).

>>> from decimal import Decimal

>>> eq = EquivalenceDigraph(R1,R2)

>>> M = Decimal('0'); D = Decimal('0')

>>> n2 = eq.order*(eq.order - 1)

>>> # sum the equivalence values (M) and their magnitudes (D) over all irreflexive pairs

>>> for x in eq.actions:

...     for y in eq.actions:

...         if x != y:

...             M += eq.relation[x][y]

...             D += abs(eq.relation[x][y])

...

>>> print('r(R1<=>R2) = %+.3f, d = %.3f, tau = %+.3f' % (M/n2,D/n2,M/D))

r(R1<=>R2) = +0.026, d = 0.356, tau = +0.073

In general we simply use the computeOrdinalCorrelation() method, which returns a dictionary with a ‘correlation’ (tau) and a ‘determination’ (d) entry. We may recover r(<=>) by multiplying tau by d (see Listing 3.38 Line 4).

1>>> corrR1R2 = R1.computeOrdinalCorrelation(R2)

2>>> tau = corrR1R2['correlation']

3>>> d = corrR1R2['determination']

4>>> r = tau * d

5>>> print('tau(R1,R2) = %+.3f, d = %.3f,\

6... r(R1<=>R2) = %+.3f' % (tau, d, r))

7

8 tau(R1,R2) = +0.073, d = 0.356, r(R1<=>R2) = +0.026

We provide for convenience a direct showCorrelation() method:

1>>> corrR1R2 = R1.computeOrdinalCorrelation(R2)

2>>> R1.showCorrelation(corrR1R2)

3 Correlation indexes:

4 Extended Kendall tau : +0.073

5 Epistemic determination : 0.356

6 Bipolar-valued equivalence : +0.026

We may now illustrate the quality of the global ranking of the movies shown with the heat map in Fig. 3.15.

3.3.2.3. Fitness of ranking heuristics

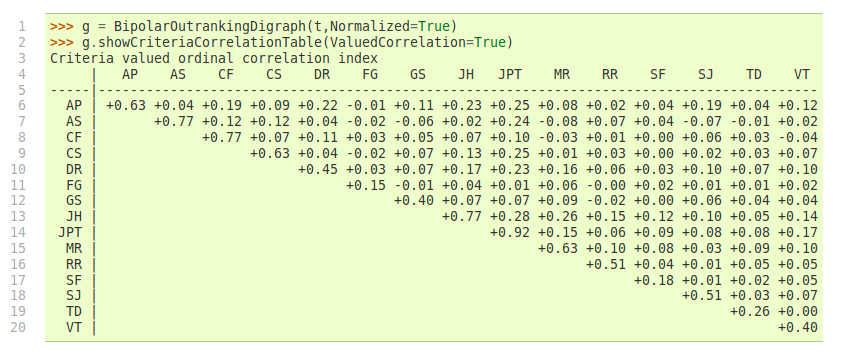

We reconsider the bipolar-valued outranking digraph g modelling the pairwise global ‘at least as well rated as’ relation among the 25 movies seen in the topic before (see Fig. 3.15).

>>> from perfTabs import PerformanceTableau

>>> t = PerformanceTableau('graffiti07')

>>> from outrankingDigraphs import BipolarOutrankingDigraph

>>> g = BipolarOutrankingDigraph(t,Normalized=True)

>>> g

*------- Object instance description ------*

Instance class : BipolarOutrankingDigraph

Instance name : rel_grafittiPerfTab.xml

# Actions : 25

# Criteria : 15

Size : 390

Determinateness : 65%

Valuation domain : {'min': Decimal('-1.0'),

'med': Decimal('0.0'),

'max': Decimal('1.0'),}

>>> g.computeCoSize()

188

Out of the 25 x 24 = 600 irreflexive movie pairs, digraph g contains 390 positively validated, 188 positively invalidated, and 22 indeterminate outranking situations (see the zero-valued cells in Fig. 3.17).
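The bookkeeping behind these three counts can be sketched on a small example. The helper `count_situations` below is a hypothetical name, not a Digraph3 method. It is applied to the off-diagonal values of the random digraph R2 shown earlier, and the last lines redo the arithmetic for g using only the figures quoted in the text.

```python
def count_situations(values, med=0.0):
    """Count values above, below and equal to the median level med."""
    validated = sum(1 for v in values if v > med)
    invalidated = sum(1 for v in values if v < med)
    indeterminate = len(values) - validated - invalidated
    return validated, invalidated, indeterminate

# Off-diagonal characteristic values of R2, copied row by row from its relation table.
r2_values = [
    -0.86, -0.78, -0.80, -0.08,
    -0.58,  0.88,  0.70, -0.22,
    -0.36,  0.54, -0.46,  0.54,
    -0.92,  0.48,  0.74, -0.60,
     0.10,  0.62,  0.00,  0.84,
]
print(count_situations(r2_values))

# Same arithmetic for digraph g: irreflexive pairs minus size minus co-size.
n = 25
size, cosize = 390, 188
print(n * (n - 1) - size - cosize)
```

For R2 this gives 9 validated, 10 invalidated and 1 indeterminate situation, and for g the 22 indeterminate situations mentioned above.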

Let us now compute the normalized majority margin r(<=>) of the equivalence between each movie critic’s marginal pairwise ratings and the global NetFlows ranking shown in the ordered heat map (see Fig. 3.15).

1>>> from linearOrders import NetFlowsOrder

2>>> nf = NetFlowsOrder(g)

3>>> nf.netFlowsRanking

4 ['mv_QS', 'mv_RR', 'mv_DG', 'mv_NP', 'mv_HN', 'mv_HS', 'mv_SM',

5 'mv_JB', 'mv_PE', 'mv_FC', 'mv_TP', 'mv_CM', 'mv_DF', 'mv_TM',

6 'mv_DJ', 'mv_AL', 'mv_RG', 'mv_MB', 'mv_GH', 'mv_HP', 'mv_BI',

7 'mv_DI', 'mv_FF', 'mv_GG', 'mv_TF']

8>>> for i,item in enumerate(\

9... g.computeMarginalVersusGlobalRankingCorrelations(\

10... nf.netFlowsRanking,ValuedCorrelation=True) ):\

11... print('r(%s<=>nf) = %+.3f' % (item[1],item[0]) )

12

13 r(JH<=>nf) = +0.500

14 r(JPT<=>nf) = +0.430

15 r(AP<=>nf) = +0.323

16 r(DR<=>nf) = +0.263

17 r(MR<=>nf) = +0.247

18 r(VT<=>nf) = +0.227

19 r(GS<=>nf) = +0.160

20 r(CS<=>nf) = +0.140

21 r(SJ<=>nf) = +0.137

22 r(RR<=>nf) = +0.133

23 r(TD<=>nf) = +0.110

24 r(CF<=>nf) = +0.110

25 r(SF<=>nf) = +0.103

26 r(AS<=>nf) = +0.080

27 r(FG<=>nf) = +0.027

In Listing 3.40 (see Lines 13-27), we recover the relational equivalence characteristic values shown in the third row of the table in Fig. 3.15. The global NetFlows ranking obviously represents a rather balanced compromise with respect to all movie critics’ opinions, as no negative valued correlation appears with any one of them. The NetFlows ranking apparently also correctly takes into account that the journalist JH, a locally renowned movie critic, shows a higher significance weight (see Line 13).
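For readers unfamiliar with the NetFlows rule, the following sketch ranks the five actions of the random digraph R1 shown earlier. It assumes the usual net flows score, i.e. the sum of the outgoing minus the sum of the incoming characteristic values of each action. It is not the NetFlowsOrder implementation, and the function name is hypothetical.

```python
actions = ['a1', 'a2', 'a3', 'a4', 'a5']
# Relation table of R1, row by row; reflexive cells are set to 0.0 and skipped.
R1 = [
    [ 0.00, -0.66,  0.44,  0.94, -0.84],
    [-0.36,  0.00, -0.70,  0.26,  0.94],
    [ 0.14,  0.20,  0.00,  0.66, -0.04],
    [-0.48, -0.76,  0.24,  0.00, -0.94],
    [-0.02,  0.10,  0.54,  0.94,  0.00],
]

def net_flows_ranking(names, table):
    """Rank names by decreasing (row sum - column sum) of the relation table."""
    n = len(names)
    scores = {}
    for i, x in enumerate(names):
        out_flow = sum(table[i][j] for j in range(n) if j != i)
        in_flow = sum(table[j][i] for j in range(n) if j != i)
        scores[x] = out_flow - in_flow
    ranking = sorted(names, key=lambda x: scores[x], reverse=True)
    return ranking, scores

ranking, scores = net_flows_ranking(actions, R1)
print(ranking)
for x in ranking:
    print('%s: %+.2f' % (x, scores[x]))
```

On this toy example the resulting order is a5, a2, a1, a3, a4. The scores always sum to zero, because every characteristic value is counted once as an outgoing and once as an incoming flow.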

The ordinal correlation between the global NetFlows ranking and the digraph g may be furthermore computed as follows:

1>>> corrgnf = g.computeOrdinalCorrelation(nf)

2>>> g.showCorrelation(corrgnf)

3 Correlation indexes:

4 Extended Kendall tau : +0.780

5 Epistemic determination : 0.300

6 Bipolar-valued equivalence : +0.234

We notice in Listing 3.41 Line 4 that the ordinal correlation index tau(g,nf) between the NetFlows ranking nf and the determined part of the outranking digraph g is quite high (+0.78). Due to the rather high number of missing data, the r-valued relational equivalence between the nf and the g digraphs, with a characteristic value of only +0.234, may be misleading. Yet, +0.234 still corresponds to an epistemic majority support of nearly 62% of the movie critics’ rating opinions.
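The step from a characteristic value of +0.234 to a majority support of nearly 62% is consistent with an affine rescaling of the valuation domain [-1.0, 1.0] onto [0.0, 1.0]. The sketch below assumes that reading. Both function names are hypothetical.

```python
def majority_support(r):
    """Map a characteristic value r in [-1, 1] to a support share in [0, 1]."""
    return (r + 1.0) / 2.0

def characteristic_value(share):
    """Inverse mapping: a support share in [0, 1] back to [-1, 1]."""
    return 2.0 * share - 1.0

print('%.1f%%' % (100 * majority_support(0.234)))
```

This prints 61.7%. An indeterminate value of 0.0 maps to a balanced 50% support, and +1.0 to unanimous support.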

It would be interesting to compare similarly the correlations one may obtain with other global ranking heuristics, like the Copeland or the Kohler ranking rule.

3.3.2.4. Illustrating preference divergences

The valued relational equivalence index gives us a further measure for studying how divergent the rating opinions expressed by the movie critics appear.

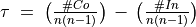

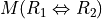

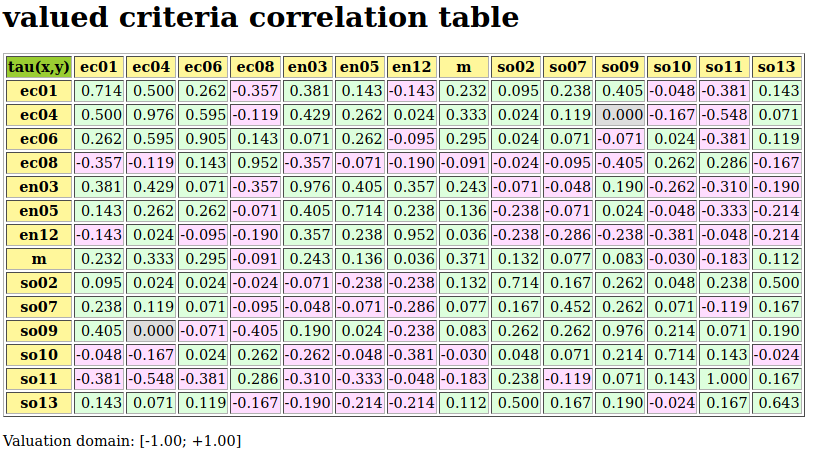

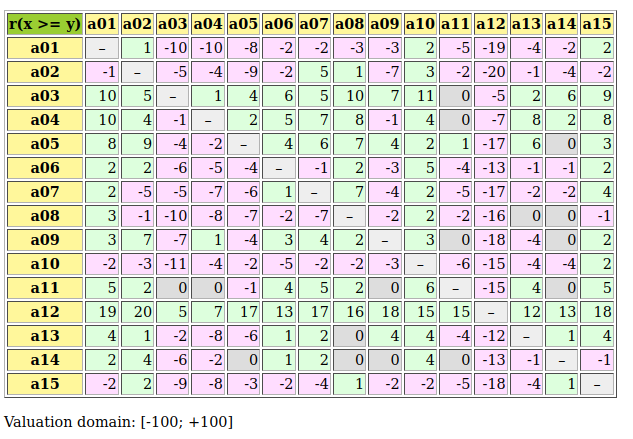

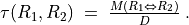

Fig. 3.19 Pairwise valued correlation of movie critics

It is remarkable to notice in the criteria correlation matrix (see Fig. 3.19) that, due to the quite numerous missing data, all pairwise valued ordinal correlation indexes r(x<=>y) appear to be of low value, except the diagonal ones. These reflexive indexes r(x<=>x) would trivially all amount to +1.0 in a plainly determined case. Here they indicate a reflexive normalized determination score d, i.e. the proportion of pairs of movies each critic did evaluate. Critic JPT (the editor of the Graffiti magazine), for instance, evaluated all but one (d = 24*23/600 = 0.92), whereas critic FG evaluated only 10 movies among the 25 in discussion (d = 10*9/600 = 0.15).
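The two determination scores quoted above follow from counting the ordered pairs of distinct movies that a critic rated on both sides. A minimal sketch of that arithmetic, with a hypothetical helper name:

```python
def reflexive_determination(rated, total):
    """Share of ordered pairs of distinct items with both items rated."""
    return rated * (rated - 1) / (total * (total - 1))

print('%.2f' % reflexive_determination(24, 25))  # critic JPT
print('%.2f' % reflexive_determination(10, 25))  # critic FG
```

The two printed values are 0.92 and 0.15, as stated in the text.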

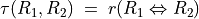

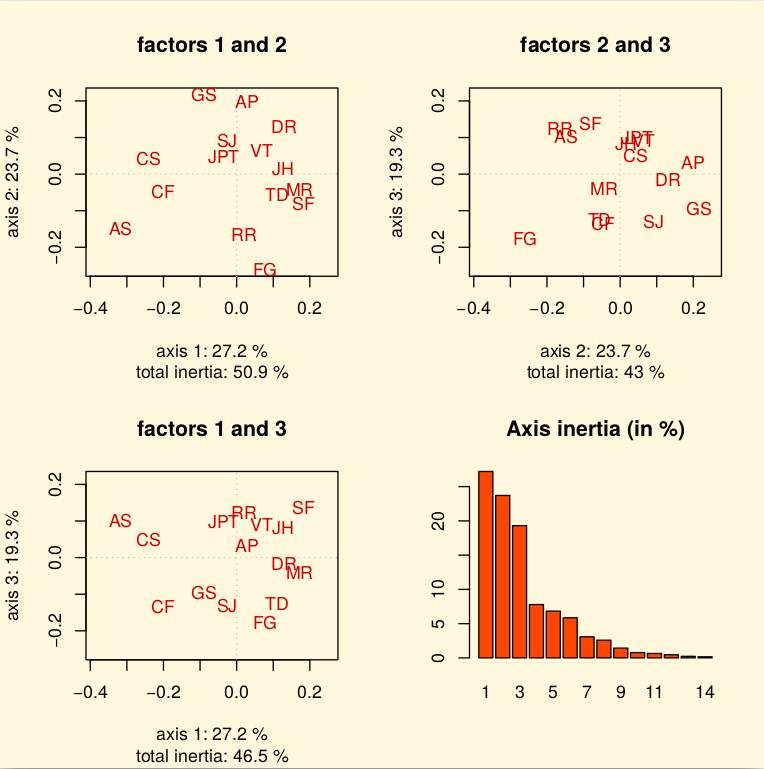

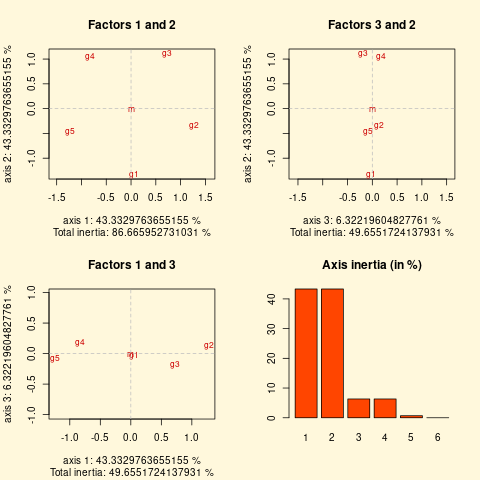

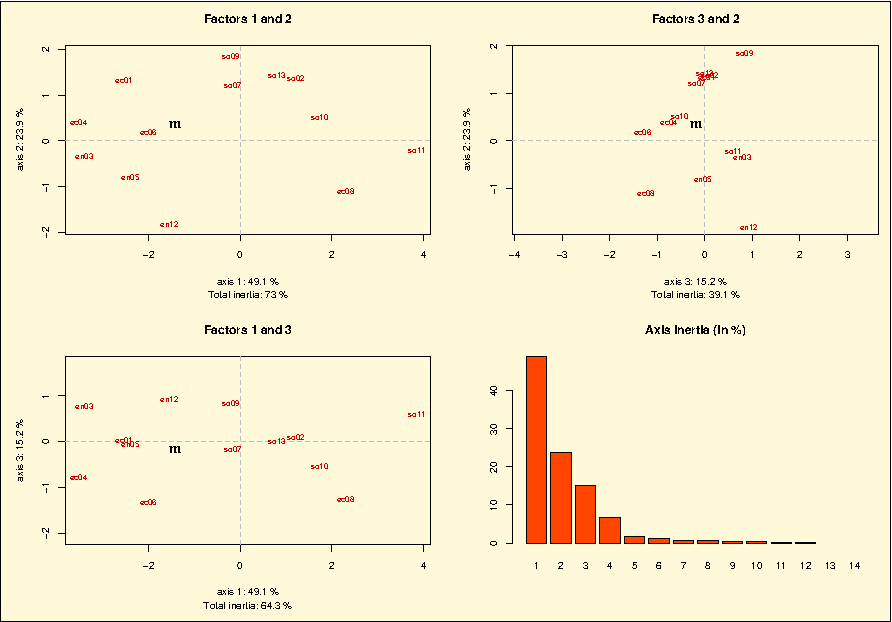

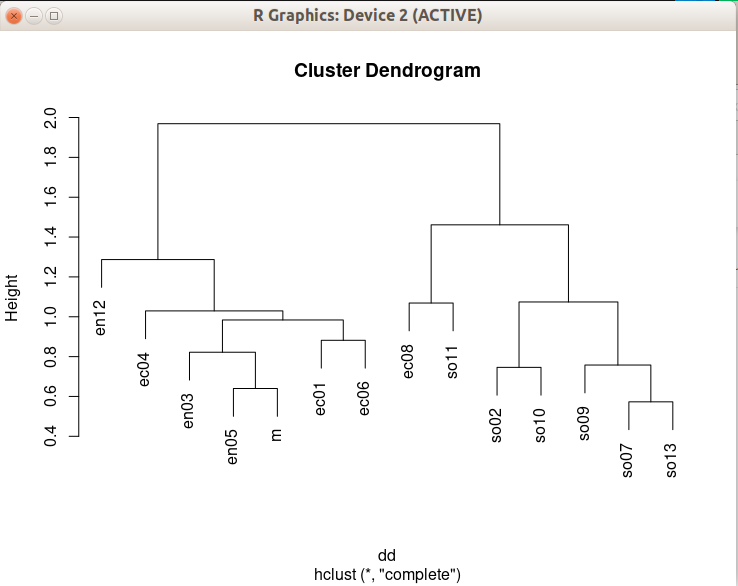

To get a picture of the actual divergence of rating opinions concerning jointly seen pairs of movies, we may develop a Principal Component Analysis ([2]) of the corresponding tau correlation matrix. The 3D plot of the first 3 principal axes is shown in Fig. 3.20.

>>> g.export3DplotOfCriteriaCorrelation(ValuedCorrelation=False)

Fig. 3.20 3D PCA plot of the criteria ordinal correlation matrix

The first 3 principal axes support together about 70% of the total inertia. Most eccentric and opposed in their respective rating opinions appear, on the first principal axis with 27.2% inertia, the conservative daily press against labour and public press. On the second principal axis with 23.7% inertia, it is the people press versus the cultural critical press. And, on the third axis with still 19.3% inertia, the written media appear most opposed to the radio media.

3.3.2.5. Exploring the better rated and the as well as rated opinions

In order to furthermore study the quality of a ranking result, it may be interesting to have a separate view on the asymmetrical and symmetrical parts of the ‘at least as well rated as’ opinions (see the tutorial on Manipulating Digraph objects).

Let us first have a look at the pairwise asymmetrical part, namely the ‘better rated than’ and ‘less well rated than’ opinions of the movie critics.

>>> from digraphs import AsymmetricPartialDigraph

>>> ag = AsymmetricPartialDigraph(g)

>>> ag.showHTMLRelationTable(actionsList=g.computeNetFlowsRanking(),ndigits=0)

Fig. 3.21 Asymmetrical part of graffiti07 digraph

We notice here that the NetFlows ranking rule inverts in fact just three ‘less well ranked than’ opinions and four ‘better ranked than’ ones. A similar look at the symmetric part, the pairwise ‘as well rated as’ opinions, suggests a preordered preference structure in several equivalently rated classes.

>>> from digraphs import SymmetricPartialDigraph

>>> sg = SymmetricPartialDigraph(g)

>>> sg.showHTMLRelationTable(actionsList=g.computeNetFlowsRanking(),ndigits=0)

Fig. 3.22 Symmetrical part of graffiti07 digraph

Such a preordering of the movies may, for instance, be computed with the computeRankingByChoosing() method, where we iteratively extract dominant kernels (remaining first choices) and absorbent kernels (remaining last choices) (see the tutorial on Computing Digraph Kernels). To this end, we operate on the asymmetrical ‘better rated than’ opinions, i.e. the codual ([3]) of the ‘at least as well rated as’ opinions (see Listing 3.42 Line 2).

1>>> from transitiveDigraphs import RankingByChoosingDigraph

2>>> rbc = RankingByChoosingDigraph(g,CoDual=True)

3>>> rbc.showRankingByChoosing()

4 Ranking by Choosing and Rejecting

5 1st First Choice ['mv_QS']

6 2nd First Choice ['mv_DG', 'mv_FC', 'mv_HN', 'mv_HS', 'mv_NP',

7 'mv_PE', 'mv_RR', 'mv_SM']

8 3rd First Choice ['mv_CM', 'mv_JB', 'mv_TM']

9 4th First Choice ['mv_AL', 'mv_TP']

10 4th Last Choice ['mv_AL', 'mv_TP']

11 3rd Last Choice ['mv_GH', 'mv_MB', 'mv_RG']

12 2nd Last Choice ['mv_DF', 'mv_DJ', 'mv_FF', 'mv_GG']

13 1st Last Choice ['mv_BI', 'mv_DI', 'mv_HP', 'mv_TF']
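The codual transform used in Listing 3.42 can be illustrated on a toy relation. The sketch below assumes the usual definition of the codual as the converse of the negation, i.e. the value of "x better than y" is the negated value of "y at least as good as x". The relation values are made up and the function is not the Digraph3 implementation.

```python
def codual(relation):
    """Converse of the negation: r_codual(x, y) = -r(y, x)."""
    return {(x, y): -relation[(y, x)] for (x, y) in relation}

# Toy 'at least as well rated as' relation on three movies (made-up values).
at_least_as_good = {
    ('m1', 'm2'): 0.6, ('m2', 'm1'): -0.4,   # m1 outranks m2 only
    ('m1', 'm3'): 0.2, ('m3', 'm1'): 0.8,    # m1 and m3 outrank each other
    ('m2', 'm3'): -0.5, ('m3', 'm2'): 0.3,   # m3 outranks m2 only
}
better_than = codual(at_least_as_good)
for pair in sorted(better_than):
    print(pair, better_than[pair])
```

A one-way outranking such as (m1,m2) stays validated in the codual, whereas the mutual outranking between m1 and m3 becomes invalidated in both directions. The codual thus keeps only the strict 'better rated than' part.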


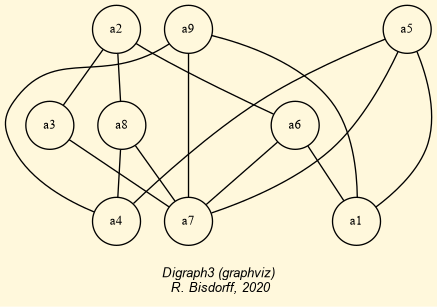

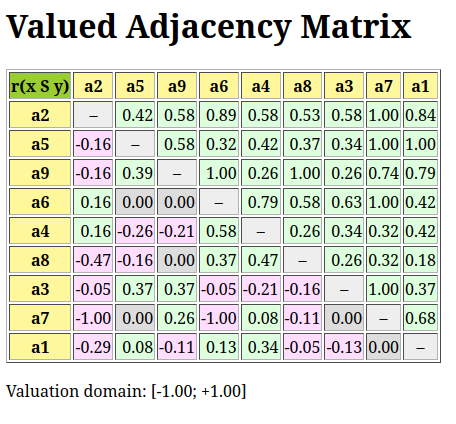

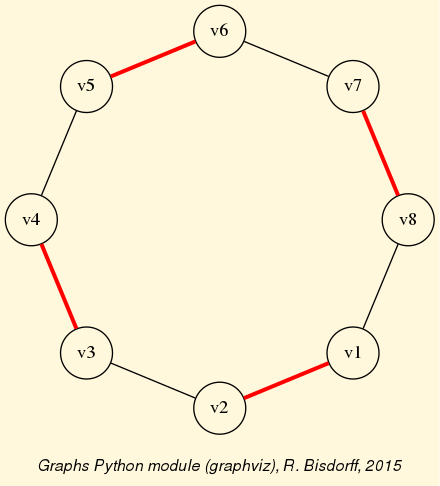

3.3.3. Applications of bipolar-valued base 3 encoded Bachet numbers

“The complexity of arithmetic circuitry for balanced ternary arithmetic is not much greater than it is for the binary system, and a given number requires only